Discover how you can overcome a shortage of labeled data by augmenting your dataset thanks to the estimated sensors’ accuracy. This article is illustrated by a real-life implementation on one industrial case, using Tensorflow and Scikit-learn.

Deep Learning algorithms do require a lot of data!(euphemism…)

When the available labeled data is not sufficient to reach a good accuracy level, a standard technic used for image recognition is to create “augmented pictures”.

The principle is plain simple: instead of feeding only one picture of a dog to your deep learning algorithm, you might create artificial pictures of the same dog with more or less subtle changes which will help your model fine-tune its network weights and, ultimately, its accuracy.

Classic modifications of the original picture will come into the form of:

- symmetries (horizontal, vertical)

- rotations (angle)

- width or height shift

- zoom, shear, color or brightness change

They can be easily implemented with a few lines in Tensorflow:

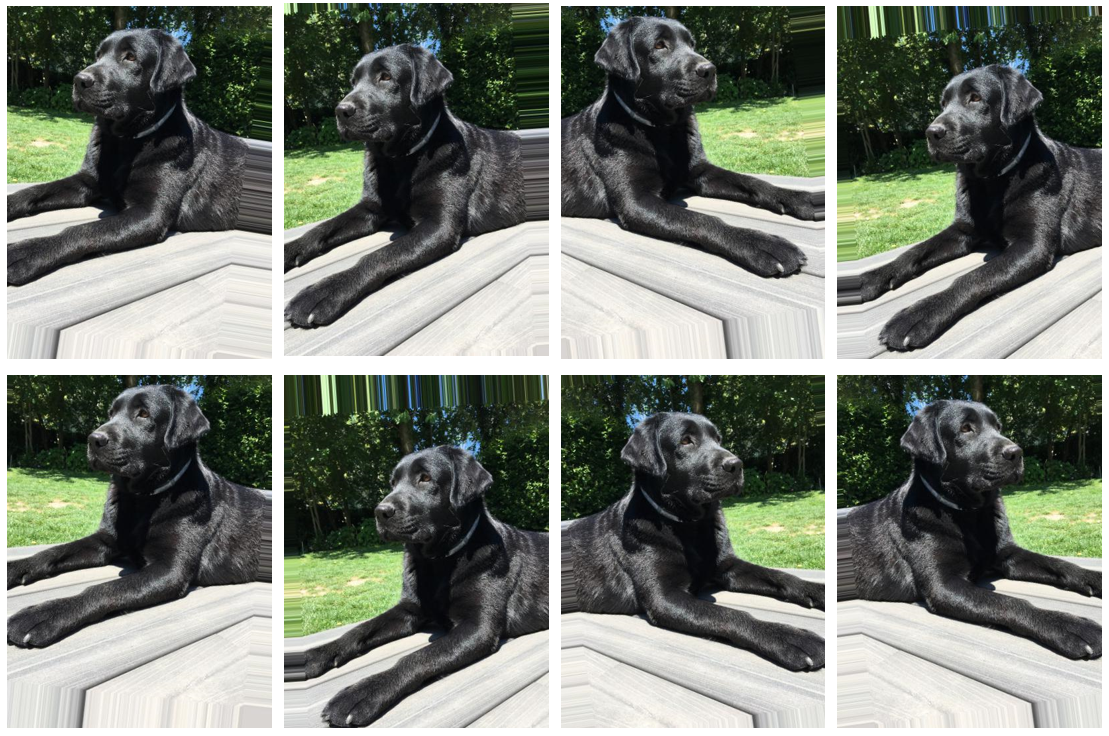

Comète, my lovely dog 🐶 (original picture)

from tensorflow.keras.preprocessing.image import ImageDataGenerator

train_datagen = ImageDataGenerator(

rotation_range=10,

horizontal_flip=True,

vertical_flip=False,

width_shift_range=0.1,

height_shift_range=0.1,

#zoom_range=0.2,

#brightness_range=(0.1, 0.9),

#shear_range=15

)

I am always kind to my dog (even virtually!) so I limited the transformations on purpose!

Augmented Comète clones!

**It is easy to understand that this mechanism is legitimate for pictures as these changes do not affect the main subject **and it will help your model also interpret dog pictures that have different orientations, colors, etc…

Is it possible to apply this technic on industrial process data to reach a higher level of accuracy on predictions?

Let’s explore one use-case I have worked on in the past. The dataset (sanitized, normalized and preprocessed) is stored here and has a 250 x 52 shape.

#towards-data-science #deep-learning #tensorflow #scikit-learn #data-preprocessing #data analysis