Using Keras & Tensorflow with AMD GPU

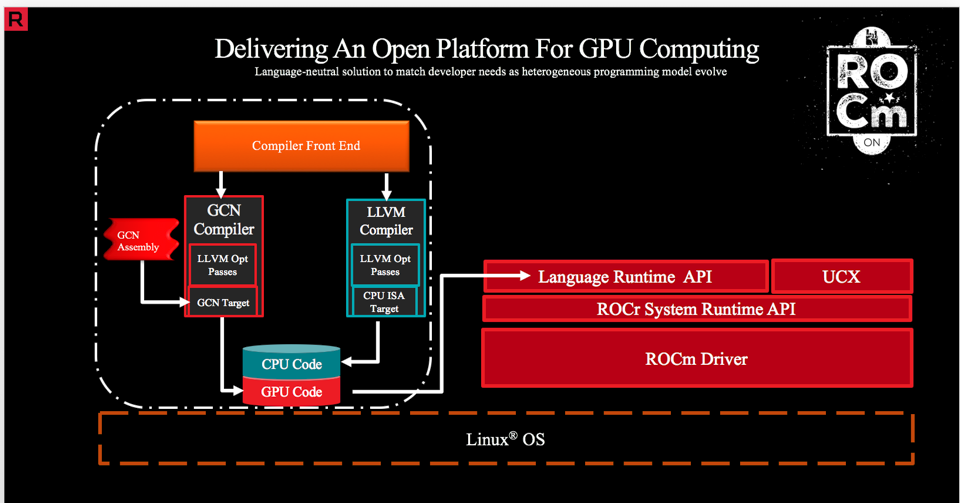

AMD is developing a new HPC platform called ROCm. Its ambition is to create a common, open-source environment capable of interfacing with both Nvidia (using CUDA) and AMD GPUs.

This tutorial explains how to set up a neural network environment using AMD GPUs in single- or multi-GPU configurations.

On the software side, we will run Tensorflow v1.12.0 as a backend to Keras on top of the ROCm kernel, using Docker.

Installing and deploying ROCm requires specific hardware and software configurations.

Hardware requirements

The official documentation (ROCm v2.1) suggests the following hardware solutions.

Supported CPUs

Current CPUs which support PCIe Gen3 + PCIe Atomics are:

- AMD Ryzen CPUs;

- The CPUs in AMD Ryzen APUs;

- AMD Ryzen Threadripper CPUs;

- AMD EPYC CPUs;

- Intel Xeon E7 v3 or newer CPUs;

- Intel Xeon E5 v3 or newer CPUs;

- Intel Xeon E3 v3 or newer CPUs;

- Intel Core i7 v4 (i7-4xxx), Core i5 v4 (i5-4xxx), Core i3 v4 (i3-4xxx) or newer CPUs (i.e. Haswell family or newer);

- Some Ivy Bridge-E systems.

Supported GPUs

ROCm officially supports AMD GPUs that use the following chips:

- GFX8 GPUs

  - “Fiji” chips, such as on the AMD Radeon R9 Fury X and Radeon Instinct MI8

  - “Polaris 10” chips, such as on the AMD Radeon RX 480/580 and Radeon Instinct MI6

  - “Polaris 11” chips, such as on the AMD Radeon RX 470/570 and Radeon Pro WX 4100

  - “Polaris 12” chips, such as on the AMD Radeon RX 550 and Radeon RX 540

- GFX9 GPUs

  - “Vega 10” chips, such as on the AMD Radeon RX Vega 64 and Radeon Instinct MI25

  - “Vega 7nm” chips (Radeon Instinct MI50, Radeon VII)

Software requirements

On the software side, the current version of ROCm (v2.1) is supported only on Linux-based systems.

The ROCm 2.1.x platform supports the following operating systems:

- Ubuntu 16.04.x and 18.04.x (version 16.04.3 and newer, or kernels 4.13 and newer)

- CentOS 7.4, 7.5, and 7.6 (using devtoolset-7 runtime support)

- RHEL 7.4, 7.5, and 7.6 (using devtoolset-7 runtime support)

Testing setup

The author used the following hardware/software configuration to test and validate the environment:

HARDWARE

- CPU: Intel Xeon E5-2630L

- RAM: 2 x 8 GB

- Motherboard: MSI X99A Krait Edition

- GPU: 2 x RX480 8GB + 1 x RX580 4GB

- SSD: Samsung 850 Evo (256 GB)

- HDD: WDC 1TB

SOFTWARE

- OS: Ubuntu 18.04 LTS

ROCm installation

To get everything working properly, it is recommended to start the installation from a freshly installed operating system. The following steps refer to Ubuntu 18.04 LTS; for other operating systems, please refer to the official documentation.

The first step is to install the ROCm kernel and its dependencies.

Update your system

Open a new terminal (CTRL + ALT + T) and run:

sudo apt update

sudo apt dist-upgrade

sudo apt install libnuma-dev

sudo reboot

Add the ROCm apt repository

To download and install the ROCm stack, first add the related repository:

wget -qO - http://repo.radeon.com/rocm/apt/debian/rocm.gpg.key | sudo apt-key add -

echo 'deb [arch=amd64] http://repo.radeon.com/rocm/apt/debian/ xenial main' | sudo tee /etc/apt/sources.list.d/rocm.list

Install ROCm

Now update the apt repository list and install the rocm-dkms meta-package:

sudo apt update

sudo apt install rocm-dkms

Set permissions

The official documentation suggests adding the current user to the video group in order to access GPU resources.

First, check which groups your user belongs to by issuing:

groups

Then add yourself to the video group:

sudo usermod -a -G video $LOGNAME

You may want to ensure that any future users you add to your system are put into the “video” group by default. To do that, you can run the following commands:

echo 'ADD_EXTRA_GROUPS=1' | sudo tee -a /etc/adduser.conf

echo 'EXTRA_GROUPS=video' | sudo tee -a /etc/adduser.conf

Then reboot the system:

reboot

Testing ROCm stack

To test the ROCm installation, open a new terminal (CTRL + ALT + T) and issue the following command:

/opt/rocm/bin/rocminfo

The output should list the agents detected on your system (the CPU and each GPU).

Then double-check by issuing:

/opt/rocm/opencl/bin/x86_64/clinfo

The output should list the available OpenCL platforms and devices.

Finally, the official documentation suggests adding the ROCm binaries to PATH:

echo 'export PATH=$PATH:/opt/rocm/bin:/opt/rocm/profiler/bin:/opt/rocm/opencl/bin/x86_64' | sudo tee -a /etc/profile.d/rocm.sh

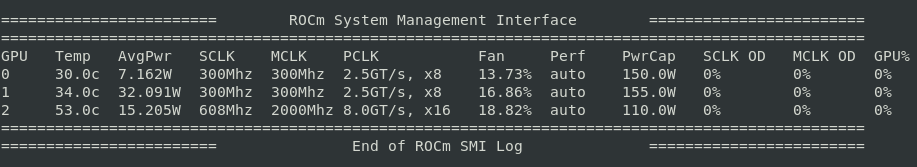

Congratulations! ROCm is now properly installed on your system, and the command:

rocm-smi

should display your hardware information and stats:

rocm-smi command output

Tensorflow Docker

The fastest and most reliable method to get the ROCm + Tensorflow backend working is to use the Docker image provided by AMD developers.

Install Docker CE

First, install Docker by following the official Docker CE installation instructions for Ubuntu systems.

Tip: To avoid typing sudo docker <command> instead of docker <command>, it’s useful to give non-root users access to Docker (see “Manage Docker as a non-root user” in the Docker documentation).

Pull ROCm Tensorflow image

It’s now time to pull the Tensorflow Docker image provided by AMD developers.

Open a new terminal (CTRL + ALT + T) and issue:

docker pull rocm/tensorflow

After a few minutes, the image will be installed on your system, ready to go.

Create a persistent space

Because of the ephemeral nature of Docker containers, modifications and files stored inside a container are not kept in the image and are lost when the container is deleted.

For this reason, it is useful to create a persistent space on the physical drive for storing files and Jupyter notebooks. The simplest method is to create a folder on the host and share it with the Docker container. To do that, issue the command:

mkdir -p $HOME/tf_docker_share

This command creates a folder named tf_docker_share in your home directory, useful for storing and reviewing data created within the container.

Starting Docker

Now, run the image in a new container session with the following command:

docker run -i -t \
    --network=host \
    --device=/dev/kfd \
    --device=/dev/dri \
    --group-add video \
    --cap-add=SYS_PTRACE \
    --security-opt seccomp=unconfined \
    --workdir=/tf_docker_share \
    -v $HOME/tf_docker_share:/tf_docker_share rocm/tensorflow:latest /bin/bash

The container starts in the /tf_docker_share directory. The terminal prompt should change to a shell inside the container, which means that you are now operating inside the Tensorflow-ROCm virtual system.

Installing Jupyter

Jupyter is a very useful tool for developing, debugging, and testing neural networks. Unfortunately, it is not installed by default in the Tensorflow-ROCm Docker image published by the ROCm team, so it must be installed manually.

To do that, from the Tensorflow-ROCm virtual system prompt:

1. Issue the following command:

pip3 install jupyter

It will install the Jupyter package into the virtual system. Leave this terminal open.

2. Open a new terminal (CTRL + ALT + T).

Find the CONTAINER ID by issuing the command:

docker ps

A table similar to the following should appear:

Container ID on the left

The first column represents the Container ID of the executed container. Copy that because it’s necessary for the next step.

3. It’s time to commit the container, so that the modifications are permanently written to a new image. From the same terminal, execute:

docker commit <container-id> rocm/tensorflow:<tag>

where the tag value is an arbitrary name, for example personal.

4. To double-check that the image has been generated correctly, from the same terminal, issue the command:

docker images

which should generate a table similar to the following:

It’s important to note that we will refer to this newly generated image for the rest of the tutorial.

The new docker run command will look like:

docker run -i -t \
    --network=host \
    --device=/dev/kfd \
    --device=/dev/dri \
    --group-add video \
    --cap-add=SYS_PTRACE \
    --security-opt seccomp=unconfined \
    --workdir=/tf_docker_share \
    -v $HOME/tf_docker_share:/tf_docker_share rocm/tensorflow:<tag> /bin/bash

where, once again, the tag value is arbitrary, for example personal.
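Since the same docker run invocation is reused with different tags, it may help to see what each flag is for. The sketch below assembles the command in Python; build_docker_run is a hypothetical helper written only for this illustration (it is not part of Docker or ROCm), and the comments summarise the flags as they are commonly described in the Docker and ROCm documentation.

```python
import os
import shlex


def build_docker_run(tag="latest", share_dir=None):
    """Return the docker run command used in this tutorial as a list of arguments."""
    # Assumption: the shared folder lives in the user's home directory.
    share_dir = share_dir or os.path.join(os.path.expanduser("~"), "tf_docker_share")
    return [
        "docker", "run", "-i", "-t",            # interactive session with a terminal
        "--network=host",                       # share the host network stack
        "--device=/dev/kfd",                    # ROCm compute interface
        "--device=/dev/dri",                    # GPU render devices
        "--group-add", "video",                 # group that can access the GPU devices
        "--cap-add=SYS_PTRACE",                 # relaxed sandboxing, as in the command above
        "--security-opt", "seccomp=unconfined",
        "--workdir=/tf_docker_share",           # start inside the shared folder
        "-v", f"{share_dir}:/tf_docker_share",  # mount the host folder into the container
        f"rocm/tensorflow:{tag}",
        "/bin/bash",
    ]


command = build_docker_run(tag="personal")
print(shlex.join(command))  # printable, copy-pasteable form of the command
```

Only the tag changes between the invocations in this tutorial, so a single helper like this keeps the three commands consistent.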

Entering Jupyter notebook environment

We can finally enter the Jupyter environment. Inside it, we will create the first neural network using Tensorflow v1.12 as backend and Keras as frontend.

Cleaning

First, close all the previously running Docker containers.

1. Check the containers that are already running:

docker ps

2. Stop all the running Docker containers:

docker container stop <container-id1> <container-id2> ... <container-idn>

3. Close all the open terminals.

Executing Jupyter

Let’s open a new terminal (CTRL + ALT + T):

1. Run a new Docker container (the personal tag will be used as default):

docker run -i -t \
    --network=host \
    --device=/dev/kfd \
    --device=/dev/dri \
    --group-add video \
    --cap-add=SYS_PTRACE \
    --security-opt seccomp=unconfined \
    --workdir=/tf_docker_share \
    -v $HOME/tf_docker_share:/tf_docker_share rocm/tensorflow:personal /bin/bash

You should be logged into the Tensorflow-ROCm Docker container prompt.

Logged into docker container

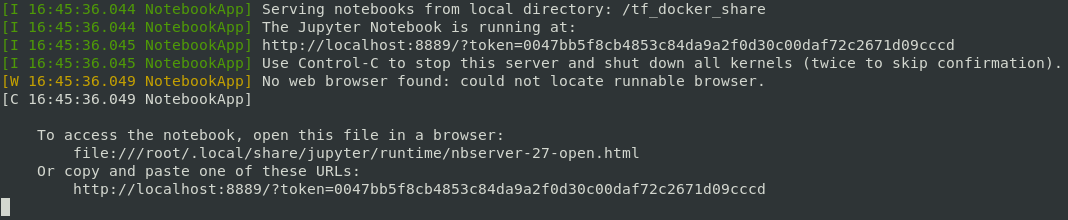

2. Execute the Jupyter notebook:

jupyter notebook --allow-root --port=8889

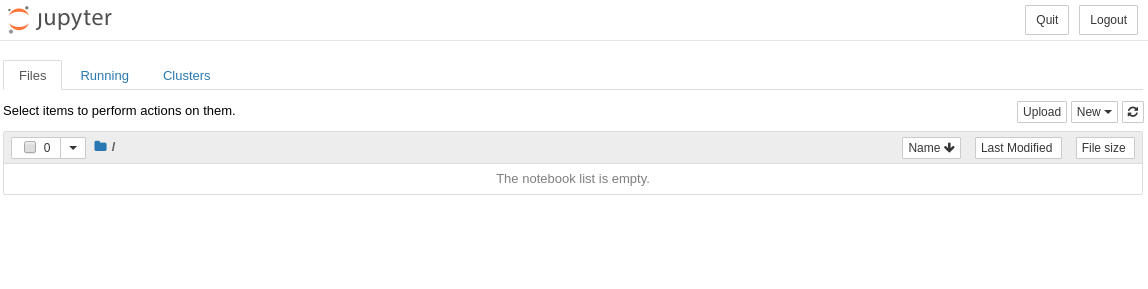

A new browser window should appear, similar to the following:

Jupyter root directory

If the new tab does not open automatically, go back to the terminal where the jupyter notebook command was executed. At the bottom of its output there is a link: follow it (CTRL + left mouse click) and a new browser tab will open on the Jupyter root directory.

Typical Jupyter notebook output. The example link is on the bottom

Train a neural network with Keras

In the last section of this tutorial, we will train a simple neural network on the MNIST dataset. We will first build a fully connected neural network.

Fully connected neural network

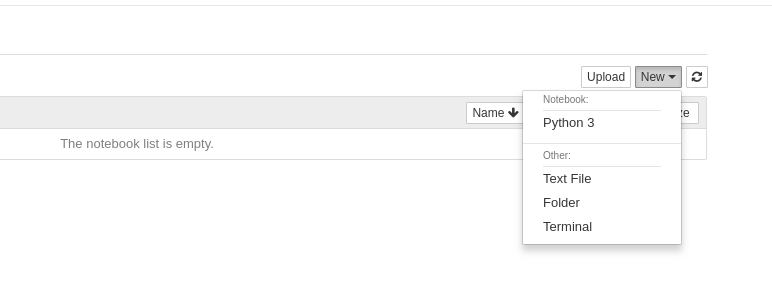

Let’s create a new notebook by selecting Python3 from the upper-right menu in the Jupyter root directory.

The upper-right menu in Jupyter explorer

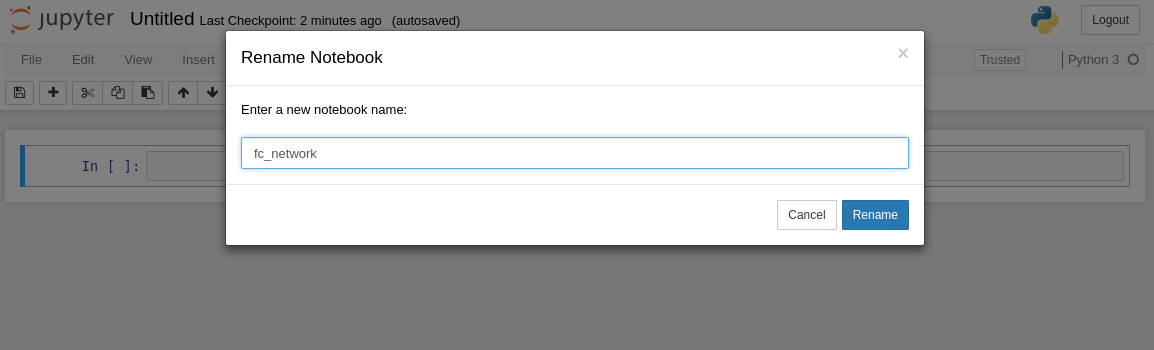

A new Jupyter notebook should pop up in a new browser tab. Rename it to fc_network by clicking Untitled in the upper-left corner of the window.

Notebook renaming

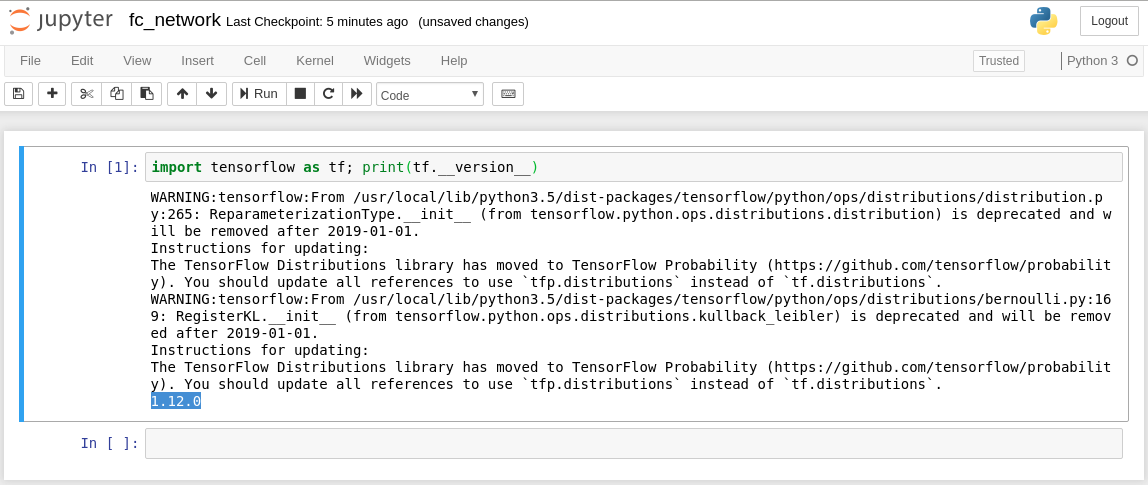

Let’s check the Tensorflow backend. In the first cell, insert:

import tensorflow as tf; print(tf.__version__)

Then press SHIFT + ENTER to execute. The output should look like:

Tensorflow V1.12.0

We are using Tensorflow v1.12.0.

Let’s import some useful functions that we will use next:

from tensorflow.keras.datasets import mnist

from tensorflow.keras.models import Sequential

from tensorflow.keras.layers import Dense, Dropout

from tensorflow.keras.optimizers import RMSprop

from tensorflow.keras.utils import to_categorical

Let’s set the batch size, the number of epochs, and the number of classes.

batch_size = 128

num_classes = 10

epochs = 10

We will now download and preprocess inputs, loading them into system memory.

# the data, split between train and test sets

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train = x_train.reshape(60000, 784)

x_test = x_test.reshape(10000, 784)

x_train = x_train.astype('float32')

x_test = x_test.astype('float32')

x_train /= 255

x_test /= 255

print(x_train.shape[0], 'train samples')

print(x_test.shape[0], 'test samples')

# convert class vectors to binary class matrices

y_train = to_categorical(y_train, num_classes)

y_test = to_categorical(y_test, num_classes)
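To make the preprocessing concrete, the following sketch mimics in plain Python, on tiny hand-made inputs, what the division by 255 and the to_categorical call do. The helpers scale_pixels and one_hot are hypothetical names used only for this illustration; they are not Keras functions.

```python
def scale_pixels(pixels):
    """Map 8-bit grey levels (0..255) to floats in the range 0.0..1.0."""
    return [p / 255 for p in pixels]


def one_hot(label, num_classes):
    """Return a vector with 1.0 at position `label` and 0.0 elsewhere."""
    vector = [0.0] * num_classes
    vector[label] = 1.0
    return vector


print(scale_pixels([0, 51, 255]))  # [0.0, 0.2, 1.0]
print(one_hot(3, 10))              # 1.0 at index 3, 0.0 everywhere else
```

With ten classes, every label becomes a vector of length 10, which matches the ten outputs of the softmax layer defined next.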

It’s time to define the neural network architecture:

model = Sequential()

model.add(Dense(512, activation='relu', input_shape=(784,)))

model.add(Dropout(0.2))

model.add(Dense(512, activation='relu'))

model.add(Dropout(0.2))

model.add(Dense(num_classes, activation='softmax'))

We will use a very simple fully connected network with two hidden layers of 512 neurons each. A dropout rate of 20% is applied after each hidden layer in order to reduce overfitting.
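As a rough illustration of what a dropout layer does during training, here is a plain-Python sketch of inverted dropout: each value is zeroed with probability rate, and the surviving values are scaled by 1 / (1 - rate) so that the expected sum stays the same. The dropout function below is a hypothetical stand-in written for this example, not the Keras implementation.

```python
import random


def dropout(values, rate, rng):
    """Inverted dropout: zero each value with probability `rate`, rescale the rest."""
    keep_scale = 1.0 / (1.0 - rate)
    return [0.0 if rng.random() < rate else v * keep_scale for v in values]


rng = random.Random(0)  # fixed seed so the run is repeatable
activations = [1.0] * 10
dropped = dropout(activations, rate=0.2, rng=rng)
print(dropped)  # every entry is either 0.0 or about 1.25 (= 1 / 0.8)
```

Dropout is only active while training; at prediction time the layer passes its inputs through unchanged.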

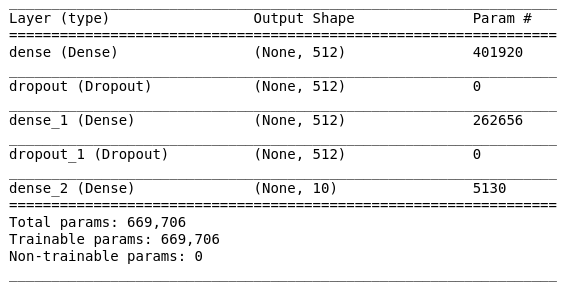

Let’s print some insight into the network architecture:

model.summary()

Network architecture

Despite the simplicity of the problem, we have a considerable number of parameters to train (about 670,000), which also means a considerable computational cost. Convolutional Neural Networks address this issue by reducing computational complexity.
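The parameter count can be checked by hand: a Dense layer has one weight per input-output pair plus one bias per output. A minimal sketch of that arithmetic for the network defined above (dense_params is a hypothetical helper name):

```python
def dense_params(inputs, units):
    """Weights plus biases of a fully connected layer."""
    return inputs * units + units


layer_sizes = [784, 512, 512, 10]  # input, two hidden layers, output
per_layer = [dense_params(i, o) for i, o in zip(layer_sizes, layer_sizes[1:])]
total = sum(per_layer)

print(per_layer)  # [401920, 262656, 5130]
print(total)      # 669706
```

Dropout layers add no trainable parameters, so this total should match the figure reported by model.summary().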

Now, compile the model:

model.compile(loss='categorical_crossentropy',
              optimizer=RMSprop(),
              metrics=['accuracy'])

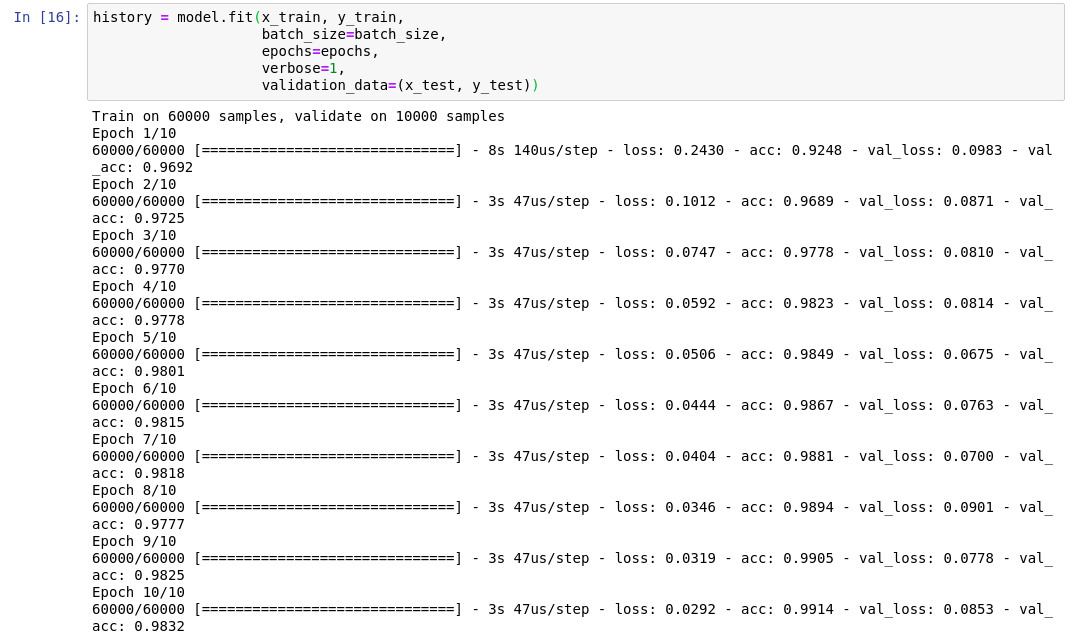

and start training:

history = model.fit(x_train, y_train,
                    batch_size=batch_size,
                    epochs=epochs,
                    verbose=1,
                    validation_data=(x_test, y_test))

Training process

The neural network was trained on a single RX 480 at a respectable 47us/step. For comparison, an Nvidia Tesla K80 reaches 43us/step but is 10x more expensive.
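To put these figures in perspective, the sketch below converts them into an approximate time per epoch. It assumes that the value reported in the Keras progress bar is the time per training sample, with 60,000 samples per epoch; that interpretation is an assumption of this example, not something stated by the measurements themselves.

```python
SAMPLES_PER_EPOCH = 60000  # MNIST training images


def epoch_seconds(us_per_sample, samples=SAMPLES_PER_EPOCH):
    """Approximate epoch duration, assuming the reported time is per sample."""
    return us_per_sample * samples / 1_000_000


rx480 = epoch_seconds(47)
k80 = epoch_seconds(43)

print(f"RX 480:    {rx480:.2f} s per epoch")        # 2.82
print(f"Tesla K80: {k80:.2f} s per epoch")          # 2.58
print(f"RX 480 takes {rx480 / k80 - 1:.0%} longer") # 9%
```

The relative difference does not depend on the per-sample assumption, since it is simply the ratio 47 / 43.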

Multi-GPU Training

As an additional step, if your system has multiple GPUs, it is possible to leverage Keras capabilities to reduce training time by splitting each batch among the different GPUs.

To do that, first specify which GPUs to use for training by declaring an environment variable (put the following command in a single cell and execute it):

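%env HIP_VISIBLE_DEVICES=0,1,...

The numbers from 0 to … identify which GPUs to use for training. Use the %env magic rather than !export: a command prefixed with ! runs in a separate shell, so the variable would not reach the notebook kernel. Run the cell before Tensorflow is imported. To disable GPU acceleration, simply set:

%env HIP_VISIBLE_DEVICES=-1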
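The same variable can also be set from plain Python through os.environ, which affects the notebook process itself (commands prefixed with ! run in a separate shell process). The sketch below uses a hypothetical helper, select_gpus, and assumes it is executed before Tensorflow is imported, so that the ROCm runtime sees the value when it starts.

```python
import os


def select_gpus(indices):
    """Expose only the listed GPU indices to HIP; an empty list hides all GPUs."""
    value = ",".join(str(i) for i in indices) if indices else "-1"
    os.environ["HIP_VISIBLE_DEVICES"] = value
    return value


select_gpus([0, 1])   # use the first two GPUs
# select_gpus([])     # would disable GPU acceleration

# Import Tensorflow only after the variable has been set.
```

If Tensorflow has already been imported in the notebook, restarting the kernel before running this cell is the safer option.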

It’s also necessary to wrap the model with the multi_gpu_model function.

As an example, if you have 3 GPUs, the previous code must be modified accordingly.
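The modified code is not reproduced here, so the following is only a sketch of how multi_gpu_model is commonly used with the Tensorflow 1.x Keras API (such as the v1.12 used in this tutorial). The wrapper name build_parallel_model is hypothetical, and the compile settings simply repeat those used for the single-GPU model.

```python
def build_parallel_model(model, gpus=3):
    """Replicate `model` on `gpus` devices and compile the wrapper for training."""
    # Imported inside the function so the cell can be defined before Tensorflow
    # is loaded; multi_gpu_model ships with the Tensorflow 1.x Keras API.
    from tensorflow.keras.optimizers import RMSprop
    from tensorflow.keras.utils import multi_gpu_model

    parallel_model = multi_gpu_model(model, gpus=gpus)
    parallel_model.compile(loss='categorical_crossentropy',
                           optimizer=RMSprop(),
                           metrics=['accuracy'])
    return parallel_model


# Usage inside the notebook, with model, x_train, etc. defined as above:
# parallel_model = build_parallel_model(model, gpus=3)
# parallel_model.fit(x_train, y_train, batch_size=batch_size, epochs=epochs,
#                    verbose=1, validation_data=(x_test, y_test))
```

Each batch is split among the GPUs, so a batch size that is a multiple of the number of GPUs keeps the per-device workload balanced.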

Conclusions

That concludes this tutorial. The next step will be to test a Convolutional Neural Network on the MNIST dataset, comparing performance in both single- and multi-GPU configurations.

What’s relevant here is that AMD GPUs perform quite well under computational load at a fraction of the price. The GPU market is changing rapidly, and ROCm gives researchers, engineers, and startups very powerful open-source tools to adopt, lowering upfront hardware costs.
