You have cleaned your data and removed the highly correlated features. You have visualized your dataset and know the classes are separable. You have tuned your hyperparameters. That’s great, but why isn’t your model performing well?

What is Model Stacking?

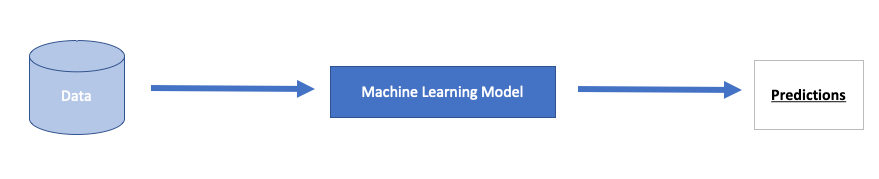

Have you tried stacking your models? Traditionally, we model our data with a single algorithm, such as Logistic Regression, Gaussian Naive Bayes, or XGBoost.

Image by Author: Traditional ML Model

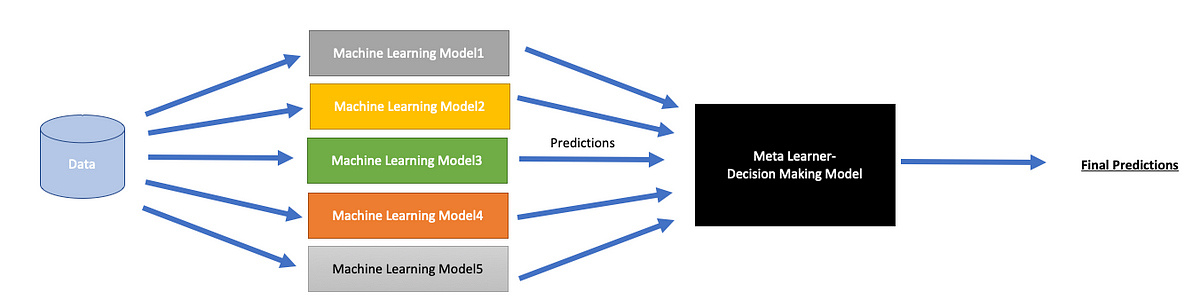

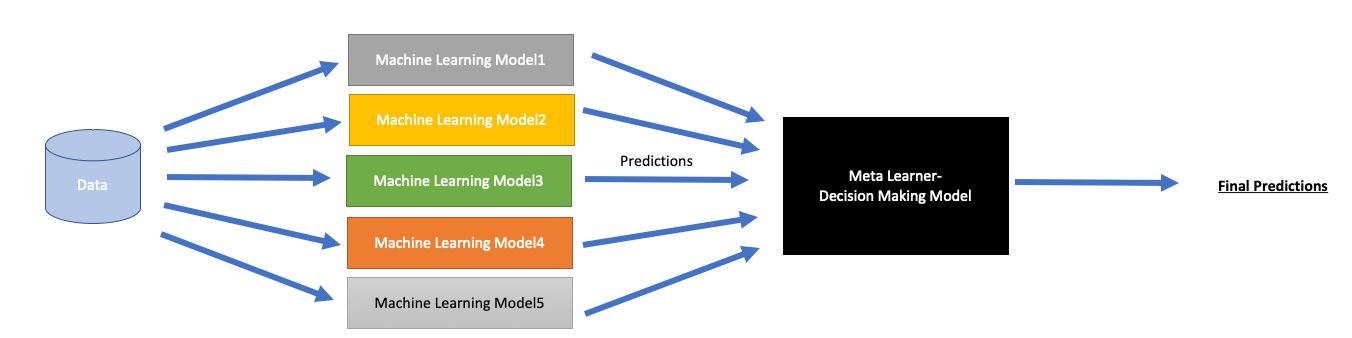

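Here’s what an ensemble stacking model does: several base models are trained on the data, and a final model combines their predictions into one.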

Image by Author: Stacked ML Model

Meta Learners

The algorithm used to combine the base estimators is called the meta learner. We can choose what this algorithm is trained on, and therefore how it responds to the predictions of the other models (classifiers in this case). Its training input can be:

- Predictions of estimators

- Predictions as well as the original training data
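
To make the two options concrete, here is a minimal pure-Python sketch of how the meta learner's training rows could be assembled in each case. The helper `build_meta_features`, its `passthrough` flag, and the sample numbers are all hypothetical and chosen only for illustration; this is not a full stacking implementation.

```python
def build_meta_features(base_predictions, X=None, passthrough=False):
    """Assemble the rows a meta learner would be trained on (illustrative helper).

    base_predictions: one list of predictions per base estimator,
                      each of length n_samples.
    X: original feature rows, only needed when passthrough=True.
    """
    # Transpose so each row holds every base estimator's prediction for one sample.
    rows = [list(preds) for preds in zip(*base_predictions)]
    if passthrough:
        if X is None:
            raise ValueError("X is required when passthrough=True")
        # Option 2: append the original features to the stacked predictions.
        rows = [row + list(features) for row, features in zip(rows, X)]
    return rows


# Made-up predicted probabilities from two base classifiers on three samples.
preds_logreg = [0.9, 0.2, 0.6]
preds_nb = [0.8, 0.4, 0.3]
# Made-up original features for the same three samples.
X = [[5.1, 3.5], [6.2, 2.9], [5.9, 3.0]]

only_preds = build_meta_features([preds_logreg, preds_nb])
with_original = build_meta_features([preds_logreg, preds_nb], X, passthrough=True)

print(only_preds[0])     # [0.9, 0.8]
print(with_original[0])  # [0.9, 0.8, 5.1, 3.5]
```

If you use scikit-learn, `StackingClassifier` exposes the same choice through its `passthrough` parameter: with `passthrough=False` the final estimator sees only the base estimators' predictions, and with `passthrough=True` it also sees the original training data.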
