TL;DR This is the first in a [series of posts] where I will discuss the evolution and future trends in the field of deep learning on graphs.

Deep learning on graphs, also known as Geometric deep learning (GDL) [1], Graph representation learning (GRL), or relational inductive biases [2], has recently become one of the hottest topics in machine learning. While early works on graph learning go back at least a decade [3] if not two [4], it is undoubtedly the past few years’ progress that has taken these methods from a niche into the spotlight of the ML community and even to the popular science press (with Quanta Magazine running a series of excellent articles on geometric deep learning for the study of manifolds, drug discovery, and protein science).

Graphs are powerful mathematical abstractions that can describe complex systems of relations and interactions in fields ranging from biology and high-energy physics to social science and economics. Since the amount of graph-structured data produced in some of these fields nowadays is enormous (prominent examples being social networks like Twitter and Facebook), it is very tempting to try to apply deep learning techniques that have been remarkably successful in other data-rich settings.

There are multiple flavours to graph learning problems that are largely application-dependent. One dichotomy is between node-wise and graph-wise problems, where in the former one tries to predict properties of individual nodes in the graph (e.g. identify malicious users in a social network), while in the latter one tries to make a prediction about the entire graph (e.g. predict solubility of a molecule). Furthermore, like in traditional ML problems, we can distinguish between supervised and unsupervised (or self-supervised) settings, as well as transductive and inductive problems.

Similarly to convolutional neural networks used in image analysis and computer vision, the key to efficient learning on graphs is designing local operations with shared weights that do message passing [5] between every node and its neighbours. A major difference compared to classical deep neural networks dealing with grid-structured data is that on graphs such operations are permutation-invariant, i.e. independent of the order of neighbour nodes, as there is usually no canonical way of ordering them.

Despite their promise and a series of success stories of graph representation learning (among which I can selfishly list the [acquisition by Twitter] of the graph-based fake news detection startup Fabula AI I have founded together with my students), we have not witnessed so far anything close to the smashing success convolutional networks have had in computer vision. In the following, I will try to outline my views on the possible reasons and how the field could progress in the next few years.

**Standardised benchmarks **like ImageNet were surely one of the key success factors of deep learning in computer vision, with some [6] even arguing that data was more important than algorithms for the deep learning revolution. We have nothing similar to ImageNet in scale and complexity in the graph learning community yet. The [Open Graph Benchmark] launched in 2019 is perhaps the first attempt toward this goal trying to introduce challenging graph learning tasks on interesting real-world graph-structured datasets. One of the hurdles is that tech companies producing diverse and rich graphs from their users’ activity are reluctant to share these data due to concerns over privacy laws such as GDPR. A notable exception is Twitter that made a dataset of 160 million tweets with corresponding user engagement graphs available to the research community under certain privacy-preserving restrictions as part of the [RecSys Challenge]. I hope that many companies will follow suit in the future.

**Software libraries **available in the public domain played a paramount role in “democratising” deep learning and making it a popular tool. If until recently, graph learning implementations were primarily a collection of poorly written and scarcely tested code, nowadays there are libraries such as [PyTorch Geometric] or [Deep Graph Library (DGL)] that are professionally written and maintained with the help of industry sponsorship. It is not uncommon to see an implementation of a new graph deep learning architecture weeks after it appears on arxiv.

Scalability is one of the key factors limiting industrial applications that often need to deal with very large graphs (think of Twitter social network with hundreds of millions of nodes and billions of edges) and low latency constraints. The academic research community has until recently almost ignored this aspect, with many models described in the literature completely inadequate for large-scale settings. Furthermore, graphics hardware (GPU), whose happy marriage with classical deep learning architectures was one of the primary forces driving their mutual success, is not necessarily the best fit for graph-structured data. In the long run, we might need specialised hardware for graphs [7].

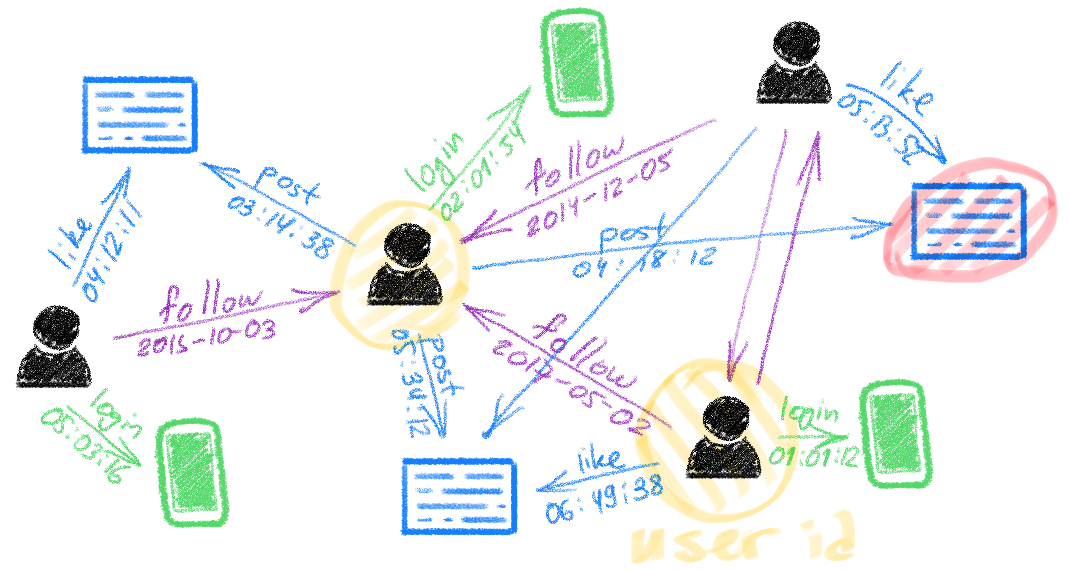

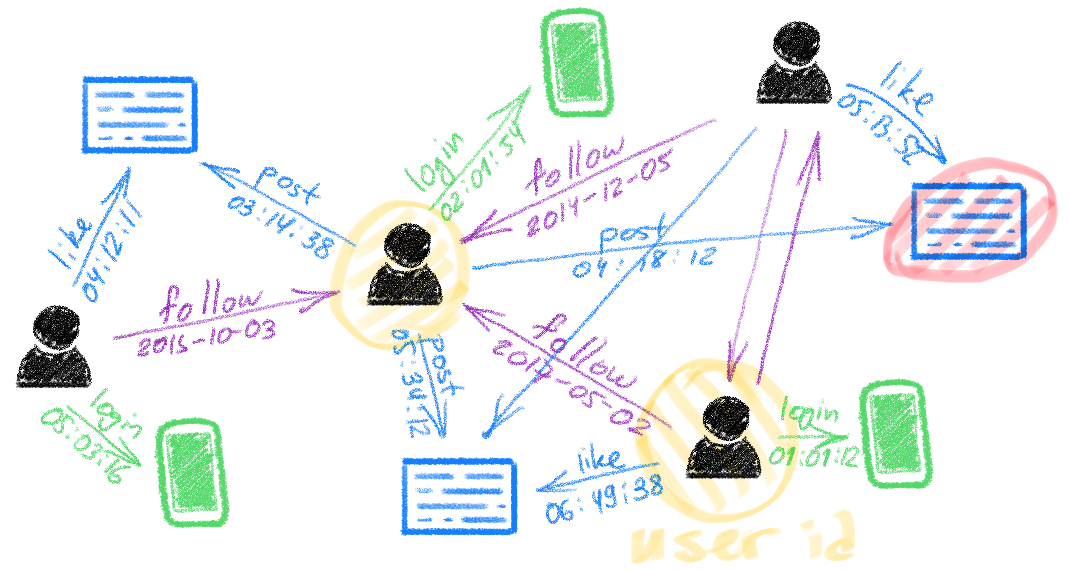

**Dynamic graphs **are another aspect that is scarcely addressed in the literature. While graphs are a common way of modelling complex systems, such an abstraction is often too simplistic as real-world systems are dynamic and evolve in time. Sometimes it is the temporal behaviour that provides crucial insights about the system. Despite some recent progress, designing graph neural network models capable of efficiently dealing with continuous-time graphs represented as a stream of node- or edge-wise events is still an open research question.

#deep-learning #representation-learning #network-science #graph-neural-networks #geometric-deep-learning #deep learning