K-means and Kohonen SOM are two of the most widely applied data clustering algorithms.

Although K-means is a simple vector quantization method and Kohonen SOM is a neural network model, they’re remarkably similar.

In this post, I’ll try to explain, in as plain a language as I can, how each of these unsupervised models works.

K-Means

K-means clustering was introduced back in the late 1960s. The goal of the algorithm is to find and group similar data objects into a number (K) of clusters.

By ‘similar’ we mean data points that are both close to each other (in the Euclidean sense) and close to the same cluster center.

The centroids of these clusters move after each iteration during training: for each cluster, the algorithm calculates the arithmetic mean of all its data points, and that mean becomes the new centroid.
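As a small illustration of that centroid update, here is a sketch in plain Python. The helper name `centroid` is made up for this example; it simply averages each coordinate over the points of one cluster.

```python
def centroid(points):
    """Component-wise arithmetic mean of a non-empty list of equal-length points."""
    n = len(points)
    # zip(*points) groups the first coordinates together, then the second, etc.
    return [sum(coords) / n for coords in zip(*points)]

cluster = [(1.0, 2.0), (3.0, 4.0), (5.0, 0.0)]
print(centroid(cluster))  # [3.0, 2.0]
```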

K (the number of clusters) is a tunable hyperparameter. This means it’s not learned and we must set it manually.

This is how K-means is trained:

- We give the algorithm a set of data points and a number K of clusters as input.

- It places K centroids at random positions and computes the distance between each data point and each centroid. Euclidean distance is the standard choice. Manhattan, cosine, or some other distance measure can be used instead, although that effectively gives a variant of K-means; the choice will depend on our specific dataset and objective.

- The algorithm assigns each data point to the cluster whose centroid is nearest to it.

- The algorithm recomputes centroid positions. It takes all input vectors in a cluster and averages them out to figure out the new position.

- The algorithm keeps looping through the previous two steps (assignment and centroid update) until convergence. Typically, we finish the training phase when the centroids stop moving and data points stop changing cluster assignments, or we can just tell the algorithm how many iterations we want.
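The training loop described above can be sketched in plain Python. This is a minimal illustration rather than a production implementation, and it makes a few assumptions of its own: the function name `kmeans` and the parameters `max_iters` and `seed` are hypothetical, the initial centroids are picked from the data points themselves (one common way of placing them "at random"), the distance is Euclidean, and a cluster that ends up empty simply keeps its old centroid.

```python
import math
import random

def kmeans(points, k, max_iters=100, seed=0):
    """Plain K-means with Euclidean distance (a sketch, not an optimized version)."""
    rng = random.Random(seed)
    # Initialization: use k distinct data points, chosen at random, as centroids.
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = None
    for _ in range(max_iters):
        # Assignment: each point goes to the cluster with the nearest centroid.
        new_labels = [
            min(range(k), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Convergence: stop once no point changes its cluster.
        if new_labels == labels:
            break
        labels = new_labels
        # Update: move each centroid to the mean of its current members.
        for j in range(k):
            members = [p for p, lab in zip(points, labels) if lab == j]
            if members:  # an empty cluster keeps its old centroid
                centroids[j] = [sum(c) / len(members) for c in zip(*members)]
    return centroids, labels

data = [(0.0, 0.0), (1.0, 1.0), (2.0, 2.0),
        (10.0, 10.0), (11.0, 11.0), (12.0, 12.0)]
centroids, labels = kmeans(data, k=2)
print(centroids)
print(labels)
```

On this toy dataset the first three points and the last three points end up in separate clusters; which of the two gets label 0 depends on the random initialization.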

K-means is easier to implement and faster than most other clustering algorithms, but it has some major flaws. Here are a few of them:

- With K-means, the end result of clustering will largely depend on the initial centroid placement.

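- The algorithm is extremely sensitive to outliers, because a single distant point can drag a centroid away from the bulk of its cluster. It can also get expensive on very large datasets, since every iteration compares each data point with each centroid.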

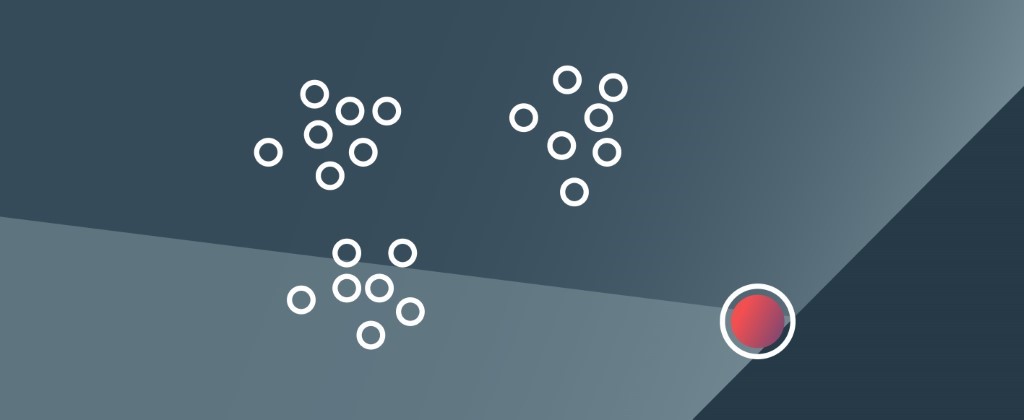

_Outliers, like the one shown here, can really mess up the K-means algorithm._


- We need to specify K, and it’s not always obvious what a good value for K is (although there are a few techniques that can help figure out the optimal number of clusters, such as the elbow method or the silhouette method).

- K-means only works on numerical variables (obviously, we can’t compute a mean of categorical variables such as _bicycle_, _car_, _horse_, etc.).

- It tends to perform poorly on high-dimensional data, where distances between points become less informative.
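To make the outlier problem from the list above concrete, here is a tiny sketch with made-up numbers. The `centroid` helper and the data are hypothetical; the point is only to show how far one distant point can pull the mean of a tight cluster.

```python
import math

def centroid(points):
    """Component-wise mean of a non-empty list of points."""
    n = len(points)
    return [sum(coords) / n for coords in zip(*points)]

cluster = [(1.0, 1.0), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0)]
outlier = (21.5, 21.5)

before = centroid(cluster)
after = centroid(cluster + [outlier])
print(before)  # [1.5, 1.5]
print(after)   # [5.5, 5.5]
print(math.dist(before, after))  # how far the single outlier dragged the centroid
```

With the outlier included, the centroid lands outside the square that the four original points occupy, so it no longer represents them well.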

**Kohonen Self-Organizing Map**

The Kohonen SOM is an unsupervised neural network commonly used for high-dimensional data clustering.

Although it’s a neural network, its architecture, unlike that of most deep learning models, is fairly straightforward. It only has three layers:

- Input layer: inputs in an n-dimensional space.

- Weight layer: adjustable weight vectors that belong to the network’s processing units. Each weight vector has the same dimensionality as the inputs.

- Kohonen layer: computational layer that consists of processing units organized in a 2D lattice-like structure (or a 1D string-like structure).

A distinctive property of SOMs is that they can map high-dimensional input vectors onto a space with fewer dimensions while preserving the dataset’s original topology.
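Here is a rough sketch of how the three layers fit together and how an input gets mapped onto the lattice. The names `make_som` and `best_matching_unit` are hypothetical, Euclidean distance is assumed, and the weights are random and untrained, so this only shows the mapping mechanics: a 5-dimensional input is reduced to a 2D grid coordinate. The topology-preserving behaviour only emerges after training, which is not shown here.

```python
import math
import random

def make_som(rows, cols, dim, seed=0):
    """Kohonen layer as a rows x cols lattice; each unit holds a dim-sized weight vector."""
    rng = random.Random(seed)
    return [[[rng.random() for _ in range(dim)] for _ in range(cols)]
            for _ in range(rows)]

def best_matching_unit(som, x):
    """Return the (row, col) of the unit whose weight vector is closest to x."""
    return min(
        ((r, c) for r, row in enumerate(som) for c, _ in enumerate(row)),
        key=lambda rc: math.dist(x, som[rc[0]][rc[1]]),
    )

som = make_som(rows=3, cols=4, dim=5)
x = [0.2, 0.9, 0.4, 0.1, 0.7]      # one 5-dimensional input vector
print(best_matching_unit(som, x))  # a (row, col) position on the 2D lattice
```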
