Introduction

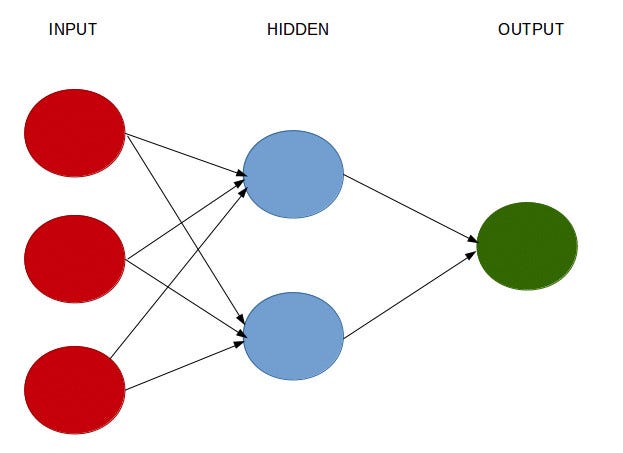

One of the biggest points of contention when designing a neural network is the configuration of the hidden nodes and layers in the model, i.e. how many hidden nodes and layers should be used for a given problem?

Many research papers have been written to address this issue, yet there remains no clear consensus, as the answer depends heavily on the data being analysed.

The purpose of using hidden layers in the first place is to account for the variation in the output layer that is not fully captured by the features in the input layer.

However, determining the depth of the neural network (number of hidden layers) and the size of each layer (number of nodes) is a somewhat arbitrary process.

Background

Let’s attempt to address this problem using an example: predicting average daily rates (ADR) for hotels. This is the output variable.

The features used in the model are as follows:

1. Cancellations: Whether a customer cancels their booking

2. Country of Origin

3. Market Segment

4. Deposit Paid

5. Customer Type

6. Required Car Parking Spaces

7. Arrival Date: Week Number

This analysis is based on the original study by Antonio, Almeida and Nunes (2016) as cited in the References section below.

Neural Network Configurations

Because ADR contains **0** values (some customers cancel their hotel booking) as well as some negative values (possibly due to refunds), an ELU activation function is used.

Unlike ReLU (a more standard activation function for regression problems), which outputs zero for every negative input, ELU allows for negative outputs.
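To make the difference concrete, here is a minimal sketch of the two activation functions in plain Python. The function names and the choice of `alpha = 1.0` are assumptions made for illustration (1.0 is the usual default in Keras); this is not the code used in the original analysis.

```python
import math

def relu(x: float) -> float:
    """ReLU: passes positive inputs through, clamps everything else to 0."""
    return max(0.0, x)

def elu(x: float, alpha: float = 1.0) -> float:
    """ELU: identity for positive inputs, alpha * (exp(x) - 1) otherwise."""
    return x if x > 0 else alpha * (math.exp(x) - 1.0)

# Compare the two functions on a few sample inputs.
for x in (-2.0, -0.5, 0.0, 1.5):
    print(f"x={x:+.1f}  relu={relu(x):.4f}  elu={elu(x):.4f}")
```

Note that the negative branch of ELU flattens out towards `-alpha`, so with `alpha = 1.0` the function itself never returns a value below -1.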

There is debate as to the number of hidden nodes that should be used in a hidden layer. For instance, this guide from Cross Validated illustrates several potential approaches to configuring the hidden nodes and layers.

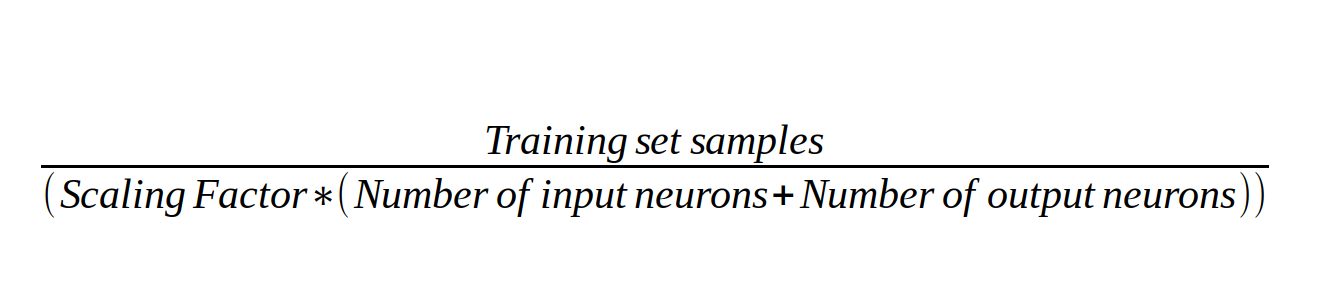

One answer suggests keeping the number of hidden nodes at or below the following value in order to prevent overfitting:

Source: Image created by author — formula based on answer from Cross Validated

With 30,045 samples in the training set, a chosen scaling factor of 2, 7 input neurons and 1 output neuron, this gives 1,877 hidden nodes (rounding down).
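As a worked check, assume the formula in question is the rule of thumb commonly quoted from that Cross Validated thread. Here $N_h$ is the upper bound on hidden nodes, $N_s$ the number of training samples, $N_i$ and $N_o$ the numbers of input and output neurons, and $\alpha$ the scaling factor. These symbol names are labels chosen for this sketch rather than taken from the article.

$$
N_h = \frac{N_s}{\alpha \, (N_i + N_o)} = \frac{30{,}045}{2 \times (7 + 1)} = \frac{30{,}045}{16} \approx 1{,}877.8
$$

Rounding down gives the figure of 1,877 hidden nodes.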

To test accuracy, three separate neural networks are run:

- A network with 1 hidden layer and 1,877 hidden nodes

- A network with 1 hidden layer and 4 hidden nodes

- A network with 2 hidden layers and 4 hidden nodes each

Neural Network 1 (1 hidden layer, 1,877 hidden nodes)

Using 1 hidden layer with 1,877 hidden nodes, such a neural network would be configured as follows:

    Model: "sequential"
    _________________________________________________________________
    Layer (type)                 Output Shape              Param #
    =================================================================
    dense (Dense)                (None, 8)                 72
    _________________________________________________________________
    dense_1 (Dense)              (None, 1669)              15021
    _________________________________________________________________
    dense_2 (Dense)              (None, 1)                 1670
    =================================================================
    Total params: 16,763
    Trainable params: 16,763
    Non-trainable params: 0
    _________________________________________________________________
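
The `Param #` column in a summary like this can be checked by hand: a dense layer with `n` inputs and `m` units has `(n + 1) * m` trainable parameters (one weight per input plus one bias, for each unit). The sketch below recomputes the counts from the layer sizes printed above. The input width of 8 is an assumption inferred from the first row, since 72 = (8 + 1) × 8. The helper name `dense_params` is hypothetical.

```python
def dense_params(n_inputs: int, n_units: int) -> int:
    """Weights plus biases for one fully connected layer."""
    return (n_inputs + 1) * n_units

# Layer widths as printed in the summary: dense, dense_1, dense_2.
layer_units = [8, 1669, 1]

# Assumed number of input columns, inferred from the first layer's 72 params.
n_inputs = 8

counts = []
for units in layer_units:
    counts.append(dense_params(n_inputs, units))
    n_inputs = units  # this layer's output feeds the next layer

print(counts)       # per-layer parameter counts
print(sum(counts))  # total trainable parameters
```

The per-layer results match the 72, 15021 and 1670 shown in the summary, and their sum matches the reported total of 16,763.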
