In this post, we’re gonna take a close look at one of the well-known Graph neural networks named GCN. First, we’ll get the intuition to see how it works, then we’ll go deeper into the maths behind it.

Why Graphs?

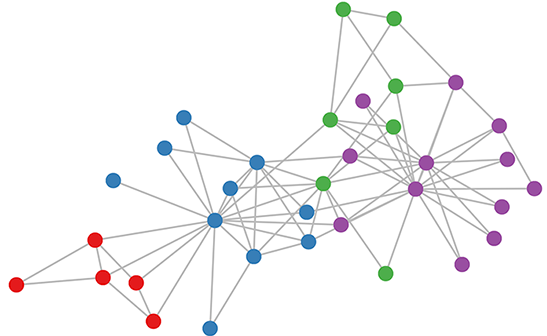

Many problems are graphs in true nature. In our world, we see many data are graphs, such as molecules, social networks, and paper citations networks.

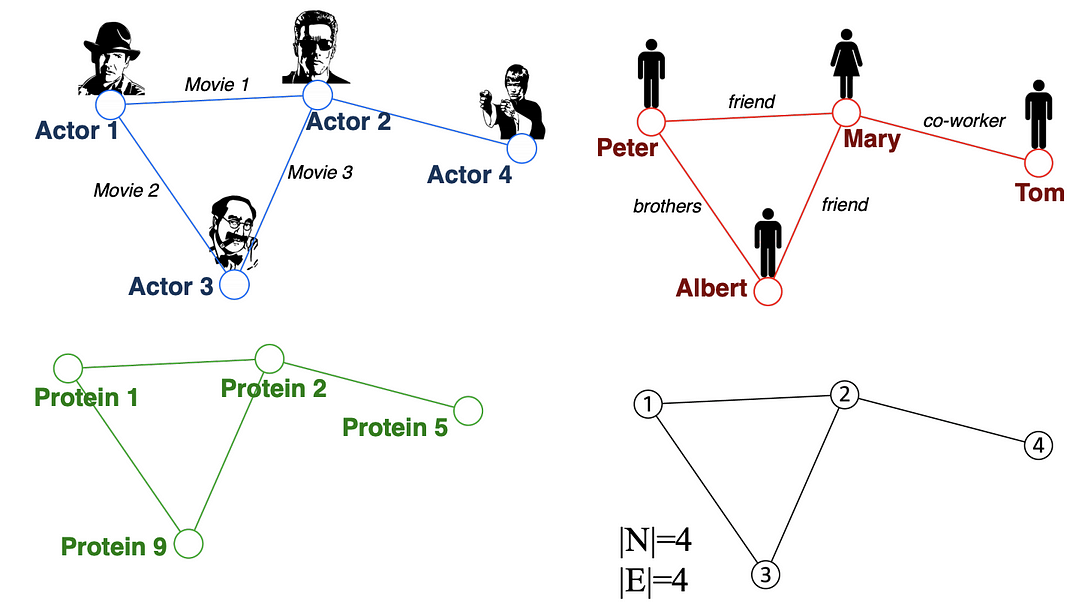

Examples of graphs. (Picture from [1])

Tasks on Graphs

- Node classification: Predict a type of a given node

- Link prediction: Predict whether two nodes are linked

- Community detection: Identify densely linked clusters of nodes

- Network similarity: How similar are two (sub)networks

Machine Learning Lifecycle

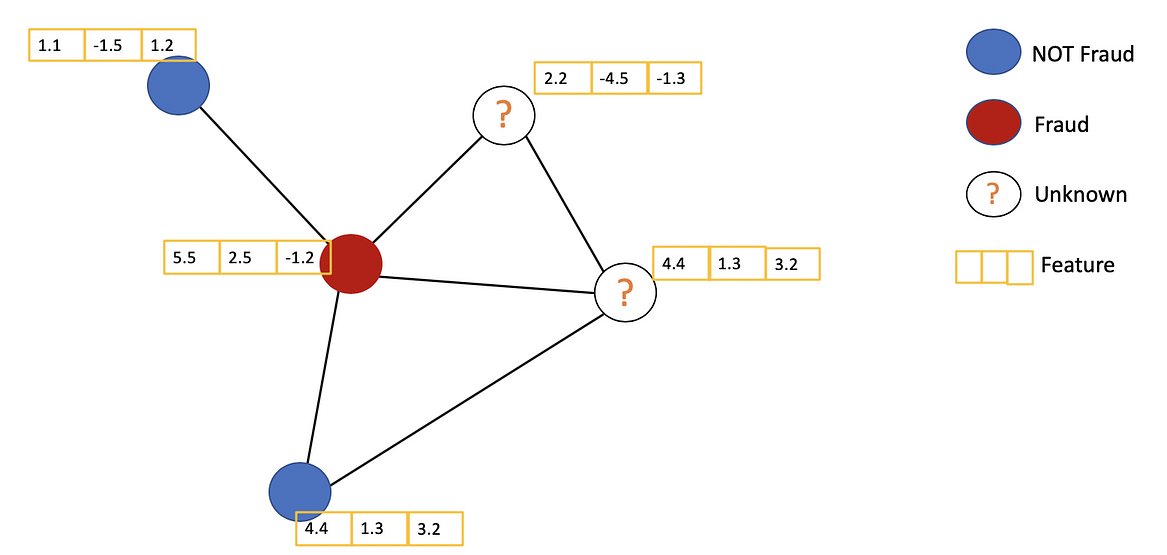

In the graph, we have node features (the data of nodes) and the structure of the graph (how nodes are connected).

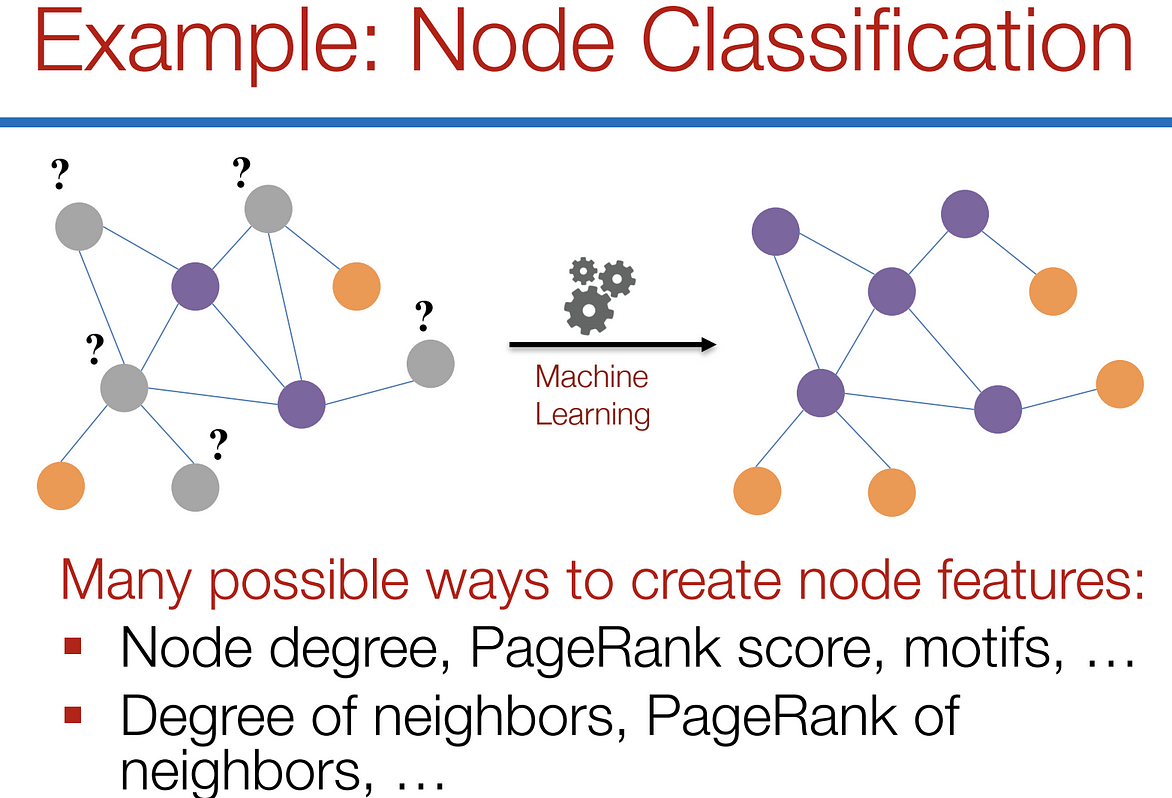

For the former, we can easily get the data from each node. But when it comes to the structure, it is not trivial to extract useful information from it. For example, if 2 nodes are close to one another, should we treat them differently to other pairs? How about high and low degree nodes? In fact, each specific task can consume a lot of time and effort just for Feature Engineering, i.e., to distill the structure into our features.

Feature engineering on graphs. (Picture from [1])

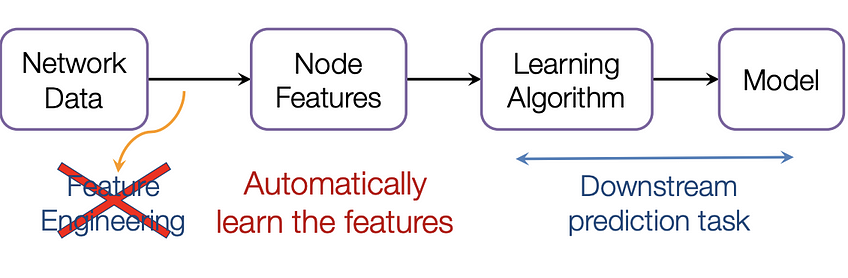

It would be much better to somehow get both the node features and the structure as the input, and let the machine to figure out what information is useful by itself.

That’s why we need Graph Representation Learning.

We want the graph can learn the “feature engineering” by itself. (Picture from [1])

Graph Convolutional Networks (GCNs)

Paper: Semi-supervised Classification with Graph Convolutional Networks(2017) [3]

GCN is a type of convolutional neural network that can work directly on graphs and take advantage of their structural information.

it solves the problem of classifying nodes (such as documents) in a graph (such as a citation network), where labels are only available for a small subset of nodes (semi-supervised learning).

Example of Semi-supervised learning on Graphs. Some nodes dont have labels (unknown nodes).

#graph-neural-networks #graph-convolution-network #deep-learning #neural-networks