Introduction

Art style transfer is the transformation of an image into a similar one that looks as if it had been painted by a particular artist.

If we are fans of Vincent van Gogh and love German Shepherds, we might want a picture of our favorite dog painted in the style of van Gogh’s Starry Night.

Image by author

Starry Night by Vincent van Gogh, Public Domain

The resulting picture can be something like this:

Image by author

Instead, if we like Katsushika Hokusai’s Great Wave off Kanagawa, we may obtain a picture like this one:

The Great Wave off Kanagawa by Katsushika Hokusai, Public Domain

Image by author

And something like the following picture, if we prefer Wassily Kandinsky’s Composition 7:

Composition 7 by Wassily Kandinsky, Public Domain

Image by author

These image transformations are possible thanks to advances in computing power that made it practical to use more complex neural networks.

Convolutional Neural Networks (CNNs), composed of a series of layers of convolutional matrix operations, are well suited to image analysis and object identification. They use a concept similar to the graphic filters and detectors found in applications like GIMP or Photoshop, but in a much more powerful and complex way.

A basic example of a matrix operation is the one performed by an edge detector. It takes a small image sample of NxN pixels (5x5 in the following example), multiplies its values element by element by a predefined NxN convolution matrix, and sums the products to obtain a single value that indicates whether an edge is present in that portion of the image. Repeating this procedure for every NxN portion of the image generates a new image that highlights the borders of the objects in it.
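The procedure described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the helper name `convolve2d`, the 3x3 kernel values (smaller than the 5x5 matrix in the figure), and the toy image are all hypothetical choices made for brevity, not the ones used to produce the article's pictures.

```python
def convolve2d(image, kernel):
    """Slide an NxN kernel over a 2D image (no padding, stride 1).

    As is usual in CNNs, the kernel is not flipped, so strictly speaking
    this computes a cross-correlation.
    """
    n = len(kernel)
    out_h = len(image) - n + 1
    out_w = len(image[0]) - n + 1
    output = []
    for row in range(out_h):
        out_row = []
        for col in range(out_w):
            # Multiply the NxN patch by the kernel element by element
            # and add up the products to get a single response value.
            total = 0
            for i in range(n):
                for j in range(n):
                    total += image[row + i][col + j] * kernel[i][j]
            out_row.append(total)
        output.append(out_row)
    return output


# Assumed kernel for illustration: it responds to left-to-right changes
# in brightness, that is, to vertical edges.
VERTICAL_EDGE_KERNEL = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

# Toy 5x6 grayscale image: dark left half (0), bright right half (9).
image = [[0, 0, 0, 9, 9, 9] for _ in range(5)]

edges = convolve2d(image, VERTICAL_EDGE_KERNEL)
for row in edges:
    print(row)
# Each of the 3 output rows is [0, 27, 27, 0]:
# the response is high only where the patch straddles the edge.
```

Flat regions produce a response of zero because the negative and positive columns of the kernel cancel out, while patches that contain the dark-to-bright transition produce a large value.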

Image by author

The two main features of CNNs are:

- The numeric values of the convolutional matrices are not predefined to find specific image features like edges. Those values are learned automatically during the optimization (training) process, so they can detect features more complex than edges.

- They have a layered structure, so the first layers detect simple image features (edges, color blocks, etc.) and the later layers use the information from the previous ones to detect complex objects like people, animals, cars, etc.
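The first point can be illustrated with a toy experiment: instead of writing the kernel by hand, we start from a kernel of zeros and let gradient descent adjust it until its output matches a desired response. This is a minimal sketch, not how a real CNN is trained. The image, the target kernel, the learning rate, the number of steps, and the helper names are all assumptions chosen to keep the example small and dependency-free.

```python
def convolve2d(image, kernel):
    """Slide an NxN kernel over a 2D image (no padding, stride 1)."""
    n = len(kernel)
    out_h = len(image) - n + 1
    out_w = len(image[0]) - n + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(n) for j in range(n))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def squared_error(pred, target):
    """Sum of squared differences between two response maps."""
    return sum((p - t) ** 2
               for p_row, t_row in zip(pred, target)
               for p, t in zip(p_row, t_row))


# Toy 6x6 grayscale image with values in [0, 1] (arbitrary pattern).
image = [[((3 * r + 5 * c) % 7) / 6 for c in range(6)] for r in range(6)]

# The response we want the learned kernel to reproduce. Here it comes
# from a hand-written vertical-edge kernel; in a real network the target
# would come from the training objective instead.
target_kernel = [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]]
target = convolve2d(image, target_kernel)

kernel = [[0.0] * 3 for _ in range(3)]  # start with no knowledge
learning_rate = 0.005                   # assumed small enough to be stable
losses = []

for step in range(200):
    pred = convolve2d(image, kernel)
    losses.append(squared_error(pred, target))
    for i in range(3):
        for j in range(3):
            # Derivative of the squared error with respect to kernel[i][j].
            grad = sum(
                2 * (pred[r][c] - target[r][c]) * image[r + i][c + j]
                for r in range(len(pred)) for c in range(len(pred[0]))
            )
            kernel[i][j] -= learning_rate * grad

print("first loss:", losses[0])
print("last loss: ", losses[-1])
```

The loss shrinks as the kernel values move toward ones that reproduce the target response. Real networks learn many such kernels per layer at once, using automatic differentiation rather than a hand-written gradient.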

This is the typical structure of a Convolutional Neural Network:

Image by Aphex34 / CC BY-SA 4.0

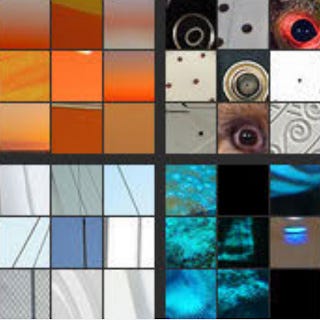

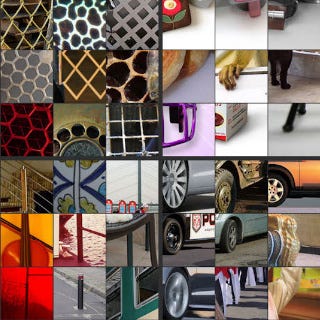

Thanks to papers like “Visualizing and Understanding Convolutional Networks”[1] by Matthew D. Zeiler and Rob Fergus, and “Feature Visualization”[12] by Chris Olah, Alexander Mordvintsev, and Ludwig Schubert, we can visually understand which features are detected by the different CNN layers:

Image by Matthew D. Zeiler et al. “Visualizing and Understanding Convolutional Networks”[1], usage authorized

The first layers detect the most basic features of the image like edges.

Image by Matthew D. Zeiler et al. “Visualizing and Understanding Convolutional Networks”[1], usage authorized

The next layers combine the information of the previous layer to detect more complex features like textures.

Image by Matthew D. Zeiler et al. “Visualizing and Understanding Convolutional Networks”[1], usage authorized

The following layers continue to use the previous information to detect features like repetitive patterns.

Image by Matthew D. Zeiler et al. “Visualizing and Understanding Convolutional Networks”[1], usage authorized

Later network layers are able to detect complex features like object parts.

Image by Matthew D. Zeiler et al. “Visualizing and Understanding Convolutional Networks”[1], usage authorized

The final layers are capable of classifying complete objects present in the image.

The ability to detect complex image features is what makes it possible to apply complex transformations to those features while the content of the image remains recognizable.
