With face detection, you can get the information you need to perform tasks like embellishing selfies and portraits, or generating avatars from a user's photo. Because ML Kit can perform face detection in real time, you can use it in applications like video chat or games that respond to the player's expressions.

See the ML Kit quickstart sample on GitHub for an example of this API in use.

Before you begin

1 - If you haven't already, add Firebase to your Android project.

2 - In your project-level build.gradle file, make sure to include Google's Maven repository in both your buildscript and allprojects sections.

3 - Add the dependencies for the ML Kit Android libraries to your module (app-level) Gradle file (usually app/build.gradle):

dependencies {

// ...

implementation 'com.google.firebase:firebase-ml-vision:23.0.0'

implementation 'com.google.firebase:firebase-ml-vision-face-model:18.0.0'

}

apply plugin: 'com.google.gms.google-services'

4 - Optional but recommended: Configure your app to automatically download the ML model to the device after your app is installed from the Play Store.

To do so, add the following declaration to your app’s AndroidManifest.xml file:

<application …>

…

<meta-data

android:name="com.google.firebase.ml.vision.DEPENDENCIES"

android:value="face" />

<!-- To use multiple models: android:value="face,model2,model3" -->

</application>

If you do not enable install-time model downloads, the model will be downloaded the first time you run the detector. Requests you make before the download has completed will produce no results.

Input image guidelines

For ML Kit to accurately detect faces, input images must contain faces that are represented by sufficient pixel data. In general, each face you want to detect in an image should be at least 100x100 pixels. If you want to detect the contours of faces, ML Kit requires higher resolution input: each face should be at least 200x200 pixels.
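As a rough illustration of the size guidelines above, a check like the following could be applied to the width and height of a face region, for example to decide whether to ask the user to move closer to the camera. The class and method names are hypothetical and not part of ML Kit; only the 100x100 and 200x200 pixel thresholds come from the guidelines.

```java
/** Hypothetical helper (not part of ML Kit) that applies the size guidelines above. */
final class FaceSizeGuidelines {
    // Minimum face size in pixels for plain detection, per the guidelines.
    static final int MIN_FACE_PX = 100;
    // Minimum face size in pixels when contour detection is wanted.
    static final int MIN_CONTOUR_FACE_PX = 200;

    private FaceSizeGuidelines() {}

    /**
     * Returns true if a face region of the given size meets the guideline.
     * Pass wantContours = true to apply the stricter contour minimum.
     */
    static boolean isLargeEnough(int faceWidthPx, int faceHeightPx, boolean wantContours) {
        int min = wantContours ? MIN_CONTOUR_FACE_PX : MIN_FACE_PX;
        return faceWidthPx >= min && faceHeightPx >= min;
    }
}
```

For instance, FaceSizeGuidelines.isLargeEnough(150, 150, true) is false, because 150 pixels falls below the 200-pixel minimum for contours.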

If you are detecting faces in a real-time application, you might also want to consider the overall dimensions of the input images. Smaller images can be processed faster, so to reduce latency, capture images at lower resolutions (keeping in mind the above accuracy requirements) and ensure that the subject’s face occupies as much of the image as possible. Also see Tips to improve real-time performance.

Poor image focus can hurt accuracy. If you aren’t getting acceptable results, try asking the user to recapture the image.

The orientation of a face relative to the camera can also affect what facial features ML Kit detects. See Face Detection Concepts.

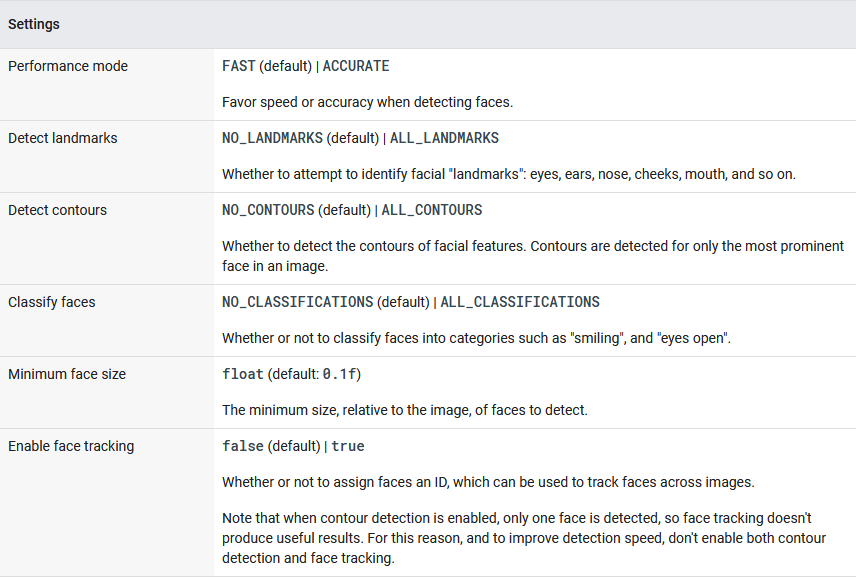

1. Configure the face detector

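Before you apply face detection to an image, if you want to change any of the face detector’s default settings, specify those settings with a FirebaseVisionFaceDetectorOptions object. You can change settings such as the performance mode (FAST by default, or ACCURATE), landmark detection, contour detection, classification, and face tracking.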

For example:

Java Android

// High-accuracy landmark detection and face classification

FirebaseVisionFaceDetectorOptions highAccuracyOpts =

new FirebaseVisionFaceDetectorOptions.Builder()

.setPerformanceMode(FirebaseVisionFaceDetectorOptions.ACCURATE)

.setLandmarkMode(FirebaseVisionFaceDetectorOptions.ALL_LANDMARKS)

.setClassificationMode(FirebaseVisionFaceDetectorOptions.ALL_CLASSIFICATIONS)

.build();

// Real-time contour detection of multiple faces

FirebaseVisionFaceDetectorOptions realTimeOpts =

new FirebaseVisionFaceDetectorOptions.Builder()

.setContourMode(FirebaseVisionFaceDetectorOptions.ALL_CONTOURS)

.build();

FaceDetectionActivity.java

Kotlin Android

// High-accuracy landmark detection and face classification

val highAccuracyOpts = FirebaseVisionFaceDetectorOptions.Builder()

.setPerformanceMode(FirebaseVisionFaceDetectorOptions.ACCURATE)

.setLandmarkMode(FirebaseVisionFaceDetectorOptions.ALL_LANDMARKS)

.setClassificationMode(FirebaseVisionFaceDetectorOptions.ALL_CLASSIFICATIONS)

.build()

// Real-time contour detection of multiple faces

val realTimeOpts = FirebaseVisionFaceDetectorOptions.Builder()

.setContourMode(FirebaseVisionFaceDetectorOptions.ALL_CONTOURS)

.build()

FaceDetectionActivity.kt

2. Run the face detector

To detect faces in an image, create a FirebaseVisionImage object from either a Bitmap, media.Image, ByteBuffer, byte array, or a file on the device. Then, pass the FirebaseVisionImage object to the FirebaseVisionFaceDetector's detectInImage method.

For face detection, you should use an image with dimensions of at least 480x360 pixels. If you are detecting faces in real time, capturing frames at this minimum resolution can help reduce latency.

1 - Create a FirebaseVisionImage object from your image.

- To create a FirebaseVisionImage object from a media.Image object, such as when capturing an image from a device’s camera, pass the media.Image object and the image’s rotation to FirebaseVisionImage.fromMediaImage().

If you use the CameraX library, the OnImageCapturedListener and ImageAnalysis.Analyzer classes calculate the rotation value for you, so you just need to convert the rotation to one of ML Kit’s ROTATION_ constants before calling FirebaseVisionImage.fromMediaImage():

Java Android

private class YourAnalyzer implements ImageAnalysis.Analyzer {

private int degreesToFirebaseRotation(int degrees) {

switch (degrees) {

case 0:

return FirebaseVisionImageMetadata.ROTATION_0;

case 90:

return FirebaseVisionImageMetadata.ROTATION_90;

case 180:

return FirebaseVisionImageMetadata.ROTATION_180;

case 270:

return FirebaseVisionImageMetadata.ROTATION_270;

default:

throw new IllegalArgumentException(

"Rotation must be 0, 90, 180, or 270.");

}

}

@Override

public void analyze(ImageProxy imageProxy, int degrees) {

if (imageProxy == null || imageProxy.getImage() == null) {

return;

}

Image mediaImage = imageProxy.getImage();

int rotation = degreesToFirebaseRotation(degrees);

FirebaseVisionImage image =

FirebaseVisionImage.fromMediaImage(mediaImage, rotation);

// Pass image to an ML Kit Vision API

// …

}

}

Kotlin Android

private class YourImageAnalyzer : ImageAnalysis.Analyzer {

private fun degreesToFirebaseRotation(degrees: Int): Int = when(degrees) {

0 -> FirebaseVisionImageMetadata.ROTATION_0

90 -> FirebaseVisionImageMetadata.ROTATION_90

180 -> FirebaseVisionImageMetadata.ROTATION_180

270 -> FirebaseVisionImageMetadata.ROTATION_270

else -> throw Exception("Rotation must be 0, 90, 180, or 270.")

}

override fun analyze(imageProxy: ImageProxy?, degrees: Int) {

val mediaImage = imageProxy?.image

val imageRotation = degreesToFirebaseRotation(degrees)

if (mediaImage != null) {

val image = FirebaseVisionImage.fromMediaImage(mediaImage, imageRotation)

// Pass image to an ML Kit Vision API

// …

}

}

}

If you don’t use a camera library that gives you the image’s rotation, you can calculate it from the device’s rotation and the orientation of the camera sensor in the device:

Java Android

private static final SparseIntArray ORIENTATIONS = new SparseIntArray();

static {

ORIENTATIONS.append(Surface.ROTATION_0, 90);

ORIENTATIONS.append(Surface.ROTATION_90, 0);

ORIENTATIONS.append(Surface.ROTATION_180, 270);

ORIENTATIONS.append(Surface.ROTATION_270, 180);

}

/**

* Get the angle by which an image must be rotated given the device’s current

* orientation.

*/

@RequiresApi(api = Build.VERSION_CODES.LOLLIPOP)

private int getRotationCompensation(String cameraId, Activity activity, Context context)

throws CameraAccessException {

// Get the device’s current rotation relative to its “native” orientation.

// Then, from the ORIENTATIONS table, look up the angle the image must be

// rotated to compensate for the device’s rotation.

int deviceRotation = activity.getWindowManager().getDefaultDisplay().getRotation();

int rotationCompensation = ORIENTATIONS.get(deviceRotation);

// On most devices, the sensor orientation is 90 degrees, but for some

// devices it is 270 degrees. For devices with a sensor orientation of

// 270, rotate the image an additional 180 ((270 + 270) % 360) degrees.

CameraManager cameraManager = (CameraManager) context.getSystemService(CAMERA_SERVICE);

int sensorOrientation = cameraManager

.getCameraCharacteristics(cameraId)

.get(CameraCharacteristics.SENSOR_ORIENTATION);

rotationCompensation = (rotationCompensation + sensorOrientation + 270) % 360;

// Return the corresponding FirebaseVisionImageMetadata rotation value.

int result;

switch (rotationCompensation) {

case 0:

result = FirebaseVisionImageMetadata.ROTATION_0;

break;

case 90:

result = FirebaseVisionImageMetadata.ROTATION_90;

break;

case 180:

result = FirebaseVisionImageMetadata.ROTATION_180;

break;

case 270:

result = FirebaseVisionImageMetadata.ROTATION_270;

break;

default:

result = FirebaseVisionImageMetadata.ROTATION_0;

Log.e(TAG, "Bad rotation value: " + rotationCompensation);

}

return result;

}

VisionImage.java

Kotlin Android

private val ORIENTATIONS = SparseIntArray()

init {

ORIENTATIONS.append(Surface.ROTATION_0, 90)

ORIENTATIONS.append(Surface.ROTATION_90, 0)

ORIENTATIONS.append(Surface.ROTATION_180, 270)

ORIENTATIONS.append(Surface.ROTATION_270, 180)

}

/**

* Get the angle by which an image must be rotated given the device’s current

* orientation.

*/

@RequiresApi(api = Build.VERSION_CODES.LOLLIPOP)

@Throws(CameraAccessException::class)

private fun getRotationCompensation(cameraId: String, activity: Activity, context: Context): Int {

// Get the device’s current rotation relative to its “native” orientation.

// Then, from the ORIENTATIONS table, look up the angle the image must be

// rotated to compensate for the device’s rotation.

val deviceRotation = activity.windowManager.defaultDisplay.rotation

var rotationCompensation = ORIENTATIONS.get(deviceRotation)

// On most devices, the sensor orientation is 90 degrees, but for some

// devices it is 270 degrees. For devices with a sensor orientation of

// 270, rotate the image an additional 180 ((270 + 270) % 360) degrees.

val cameraManager = context.getSystemService(CAMERA_SERVICE) as CameraManager

val sensorOrientation = cameraManager

.getCameraCharacteristics(cameraId)

.get(CameraCharacteristics.SENSOR_ORIENTATION)!!

rotationCompensation = (rotationCompensation + sensorOrientation + 270) % 360

// Return the corresponding FirebaseVisionImageMetadata rotation value.

val result: Int

when (rotationCompensation) {

0 -> result = FirebaseVisionImageMetadata.ROTATION_0

90 -> result = FirebaseVisionImageMetadata.ROTATION_90

180 -> result = FirebaseVisionImageMetadata.ROTATION_180

270 -> result = FirebaseVisionImageMetadata.ROTATION_270

else -> {

result = FirebaseVisionImageMetadata.ROTATION_0

Log.e(TAG, "Bad rotation value: $rotationCompensation")

}

}

return result

}

VisionImage.kt
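To make the rotation arithmetic in the snippets above easier to follow, here is a minimal sketch of the same calculation with the Android dependencies removed. It assumes the display rotation and sensor orientation are passed in as plain degree values (0, 90, 180, or 270) rather than read from Surface and CameraCharacteristics; the class and method names are hypothetical.

```java
/** Hypothetical, Android-free restatement of the rotation compensation arithmetic above. */
final class RotationMath {
    private RotationMath() {}

    /** Mirrors the ORIENTATIONS table: display rotation in degrees to base compensation angle. */
    static int baseCompensation(int displayRotationDegrees) {
        switch (displayRotationDegrees) {
            case 0:
                return 90;
            case 90:
                return 0;
            case 180:
                return 270;
            case 270:
                return 180;
            default:
                throw new IllegalArgumentException(
                        "Display rotation must be 0, 90, 180, or 270.");
        }
    }

    /** Combines the table lookup with the sensor orientation, as in getRotationCompensation(). */
    static int rotationCompensation(int displayRotationDegrees, int sensorOrientationDegrees) {
        return (baseCompensation(displayRotationDegrees) + sensorOrientationDegrees + 270) % 360;
    }
}
```

With a sensor orientation of 90 degrees and the device in its native orientation, the result is (90 + 90 + 270) % 360 = 90. With a sensor orientation of 270 degrees, the same device position gives 270, which is the additional 180 degrees mentioned in the code comments.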

Then, pass the media.Image object and the rotation value to FirebaseVisionImage.fromMediaImage():

Java Android

FirebaseVisionImage image = FirebaseVisionImage.fromMediaImage(mediaImage, rotation);

VisionImage.java

Kotlin Android

val image = FirebaseVisionImage.fromMediaImage(mediaImage, rotation)

VisionImage.kt

- To create a FirebaseVisionImage object from a file URI, pass the app context and file URI to FirebaseVisionImage.fromFilePath(). This is useful when you use an ACTION_GET_CONTENT intent to prompt the user to select an image from their gallery app.

Java Android

FirebaseVisionImage image;

try {

image = FirebaseVisionImage.fromFilePath(context, uri);

} catch (IOException e) {

e.printStackTrace();

}

VisionImage.java

Kotlin Android

val image: FirebaseVisionImage

try {

image = FirebaseVisionImage.fromFilePath(context, uri)

} catch (e: IOException) {

e.printStackTrace()

}

VisionImage.kt

- To create a FirebaseVisionImage object from a ByteBuffer or a byte array, first calculate the image rotation as described above for media.Image input.

Then, create a FirebaseVisionImageMetadata object that contains the image’s height, width, color encoding format, and rotation:

Java Android

FirebaseVisionImageMetadata metadata = new FirebaseVisionImageMetadata.Builder()

.setWidth(480) // 480x360 is typically sufficient for

.setHeight(360) // image recognition

.setFormat(FirebaseVisionImageMetadata.IMAGE_FORMAT_NV21)

.setRotation(rotation)

.build();

VisionImage.java

Kotlin Android

val metadata = FirebaseVisionImageMetadata.Builder()

.setWidth(480) // 480x360 is typically sufficient for

.setHeight(360) // image recognition

.setFormat(FirebaseVisionImageMetadata.IMAGE_FORMAT_NV21)

.setRotation(rotation)

.build()

VisionImage.kt

Use the buffer or array, and the metadata object, to create a FirebaseVisionImage object:

Java Android

FirebaseVisionImage image = FirebaseVisionImage.fromByteBuffer(buffer, metadata);

// Or: FirebaseVisionImage image = FirebaseVisionImage.fromByteArray(byteArray, metadata);

VisionImage.java

Kotlin Android

val image = FirebaseVisionImage.fromByteBuffer(buffer, metadata)

// Or: val image = FirebaseVisionImage.fromByteArray(byteArray, metadata)

VisionImage.kt
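When building the metadata by hand, it can help to check that the buffer length matches the declared width, height, and format before handing it to ML Kit. The sketch below assumes the NV21 layout (a full-resolution Y plane followed by interleaved V and U samples at half resolution in each dimension); the helper name is hypothetical and not part of ML Kit.

```java
/** Hypothetical helper for sanity-checking NV21 buffer sizes; not part of ML Kit. */
final class Nv21Size {
    private Nv21Size() {}

    /** Expected byte count of an NV21 frame with the given dimensions. */
    static int expectedBytes(int width, int height) {
        // One byte per pixel for the Y (luma) plane.
        int lumaBytes = width * height;
        // One V byte and one U byte per 2x2 pixel block, rounding odd sizes up.
        int chromaBytes = 2 * ((width + 1) / 2) * ((height + 1) / 2);
        return lumaBytes + chromaBytes;
    }
}
```

For the 480x360 frame used in the metadata example, this comes to 259200 bytes.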

- To create a FirebaseVisionImage object from a Bitmap object:

Java Android

FirebaseVisionImage image = FirebaseVisionImage.fromBitmap(bitmap);

VisionImage.java

Kotlin Android

val image = FirebaseVisionImage.fromBitmap(bitmap)

VisionImage.kt

The image represented by the Bitmap object must be upright, with no additional rotation required.

2 - Get an instance of FirebaseVisionFaceDetector:

Java Android

FirebaseVisionFaceDetector detector = FirebaseVision.getInstance()

.getVisionFaceDetector(options);

FaceDetectionActivity.java

Kotlin Android

val detector = FirebaseVision.getInstance()

.getVisionFaceDetector(options)

FaceDetectionActivity.kt

3 - Finally, pass the image to the detectInImage method:

Java Android

Task<List<FirebaseVisionFace>> result =

detector.detectInImage(image)

.addOnSuccessListener(

new OnSuccessListener<List<FirebaseVisionFace>>() {

@Override

public void onSuccess(List<FirebaseVisionFace> faces) {

// Task completed successfully

// …

}

})

.addOnFailureListener(

new OnFailureListener() {

@Override

public void onFailure(@NonNull Exception e) {

// Task failed with an exception

// …

}

});

FaceDetectionActivity.java

Kotlin Android

val result = detector.detectInImage(image)

.addOnSuccessListener { faces ->

// Task completed successfully

// …

}

.addOnFailureListener(

object : OnFailureListener {

override fun onFailure(e: Exception) {

// Task failed with an exception

// …

}

})

FaceDetectionActivity.kt

3. Get information about detected faces

If the face detection operation succeeds, a list of FirebaseVisionFace objects will be passed to the success listener. Each FirebaseVisionFace object represents a face that was detected in the image. For each face, you can get its bounding coordinates in the input image, as well as any other information you configured the face detector to find. For example:

Java Android

for (FirebaseVisionFace face : faces) {

Rect bounds = face.getBoundingBox();

float rotY = face.getHeadEulerAngleY(); // Head is rotated to the right rotY degrees

float rotZ = face.getHeadEulerAngleZ(); // Head is tilted sideways rotZ degrees

// If landmark detection was enabled (mouth, ears, eyes, cheeks, and

// nose available):

FirebaseVisionFaceLandmark leftEar = face.getLandmark(FirebaseVisionFaceLandmark.LEFT_EAR);

if (leftEar != null) {

FirebaseVisionPoint leftEarPos = leftEar.getPosition();

}

// If contour detection was enabled:

List<FirebaseVisionPoint> leftEyeContour =

face.getContour(FirebaseVisionFaceContour.LEFT_EYE).getPoints();

List<FirebaseVisionPoint> upperLipBottomContour =

face.getContour(FirebaseVisionFaceContour.UPPER_LIP_BOTTOM).getPoints();

// If classification was enabled:

if (face.getSmilingProbability() != FirebaseVisionFace.UNCOMPUTED_PROBABILITY) {

float smileProb = face.getSmilingProbability();

}

if (face.getRightEyeOpenProbability() != FirebaseVisionFace.UNCOMPUTED_PROBABILITY) {

float rightEyeOpenProb = face.getRightEyeOpenProbability();

}

// If face tracking was enabled:

if (face.getTrackingId() != FirebaseVisionFace.INVALID_ID) {

int id = face.getTrackingId();

}

}

FaceDetectionActivity.java

Kotlin Android

for (face in faces) {

val bounds = face.boundingBox

val rotY = face.headEulerAngleY // Head is rotated to the right rotY degrees

val rotZ = face.headEulerAngleZ // Head is tilted sideways rotZ degrees

// If landmark detection was enabled (mouth, ears, eyes, cheeks, and

// nose available):

val leftEar = face.getLandmark(FirebaseVisionFaceLandmark.LEFT_EAR)

leftEar?.let {

val leftEarPos = leftEar.position

}

// If contour detection was enabled:

val leftEyeContour = face.getContour(FirebaseVisionFaceContour.LEFT_EYE).points

val upperLipBottomContour = face.getContour(FirebaseVisionFaceContour.UPPER_LIP_BOTTOM).points

// If classification was enabled:

if (face.smilingProbability != FirebaseVisionFace.UNCOMPUTED_PROBABILITY) {

val smileProb = face.smilingProbability

}

if (face.rightEyeOpenProbability != FirebaseVisionFace.UNCOMPUTED_PROBABILITY) {

val rightEyeOpenProb = face.rightEyeOpenProbability

}

// If face tracking was enabled:

if (face.trackingId != FirebaseVisionFace.INVALID_ID) {

val id = face.trackingId

}

}

FaceDetectionActivity.kt
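The classification checks above compare each probability against a sentinel before using it. One way to act on such a value is sketched below in plain Java. The sentinel constant here is a local stand-in for FirebaseVisionFace.UNCOMPUTED_PROBABILITY, and its value of -1.0f is an assumption made for this sketch. The class name and the threshold parameter are also hypothetical.

```java
/** Hypothetical helper that turns a smiling probability into a yes/no decision. */
final class SmileCheck {
    // Stand-in for FirebaseVisionFace.UNCOMPUTED_PROBABILITY.
    // The value -1.0f is an assumption made for this sketch.
    static final float UNCOMPUTED_PROBABILITY = -1.0f;

    private SmileCheck() {}

    /**
     * Returns true only if a probability was computed and it reaches the threshold.
     * An uncomputed probability is treated as "not smiling".
     */
    static boolean isSmiling(float smilingProbability, float threshold) {
        if (smilingProbability == UNCOMPUTED_PROBABILITY) {
            return false;
        }
        return smilingProbability >= threshold;
    }
}
```

Treating an uncomputed value as false keeps callers from mistaking the sentinel for a real, very low probability. An app that needs to tell "unknown" apart from "not smiling" would want a three-state result instead.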

Example of face contours

When you have face contour detection enabled, you get a list of points for each facial feature that was detected. These points represent the shape of the feature. See the Face Detection Concepts Overview for details about how contours are represented.

The following image illustrates how these points map to a face:

Real-time face detection

If you want to use face detection in a real-time application, follow these guidelines to achieve the best framerates:

- Configure the face detector to use either face contour detection or classification and landmark detection, but not both.

Use one of these configurations:

- Contour detection

- Landmark detection

- Classification

- Landmark detection and classification

Avoid these combinations:

- Contour detection and landmark detection

- Contour detection and classification

- Contour detection, landmark detection, and classification

- Enable FAST mode (enabled by default).

- Consider capturing images at a lower resolution. However, also keep in mind this API’s image dimension requirements.

- Throttle calls to the detector. If a new video frame becomes available while the detector is running, drop the frame. See the VisionProcessorBase class in the quickstart sample app for an example.

- If you are using the output of the detector to overlay graphics on the input image, first get the result from ML Kit, then render the image and overlay in a single step. By doing so, you render to the display surface only once for each input frame. See the CameraSourcePreview and GraphicOverlay classes in the quickstart sample app for an example.

- If you use the Camera2 API, capture images in ImageFormat.YUV_420_888 format.

- If you use the older Camera API, capture images in ImageFormat.NV21 format.
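The advice to throttle calls and drop frames while the detector is busy can be sketched with a single atomic flag. This is only an illustration, not the approach used by the quickstart sample, and the class and method names are hypothetical.

```java
/** Hypothetical frame gate: lets one frame through at a time and drops the rest. */
final class FrameThrottle {
    // True while a frame is being processed by the detector.
    private final java.util.concurrent.atomic.AtomicBoolean busy =
            new java.util.concurrent.atomic.AtomicBoolean(false);

    /**
     * Returns true if the caller may submit this frame to the detector.
     * Returns false if a previous frame is still in flight, meaning this frame should be dropped.
     */
    boolean tryAcquire() {
        return busy.compareAndSet(false, true);
    }

    /** Marks the in-flight frame as finished so the next frame can be accepted. */
    void release() {
        busy.set(false);
    }
}
```

In an analyzer, this might be used by calling tryAcquire() at the top of the frame callback and returning immediately when it is false, then calling release() from both the success and failure listeners of detectInImage so that a failed detection does not block later frames.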
