I am a big fan of the Ben 10 Series and I have always wondered why Ben’s Omnitrix fails to change into an alien that Ben chooses to be(This is largely due to a weak A.I system already built into the watch). To help Ben, We will devise “OmniNet”, a neural network capable of predicting an appropriate alien according to the given situation.

As discussed on the show, the Omnitrix is basically a server that connects to the Planet Primus to harness the DNA of around 10000 aliens! If I was the engineer of the device, I would certainly add one or more features to the watch.

Primus located somewhere deep in space.

Why and How?

W

hy: The Omnitrix/Ultimatrix is a special case as it is not aware of the surrounding environment. As per Azmuth and others, the Omnitrix sometimes gives the wrong transformation because of its own Artificial Intelligence System that gets the classification wrong. Let us imagine that Azmuth the creator has hired us to build a new Artificial Intelligence/ML system.

H

ow: To build our system, we require some form of initial data, and thanks to our fellow Earthlings, we have Kaggle’s Ben 10 Dataset.

Requirements

For any project, we need a good requirement list and for this project we need:

- Python 3.8: The Programming Language

- Scikit-Learn: The General Machine Learning

- Pandas: For Data Analysis

- Numpy: For numerical computations

- TensorFlow: To Build our Deep Neural Network

- Matplotlib: To graph our progress

Data Preprocessing

O

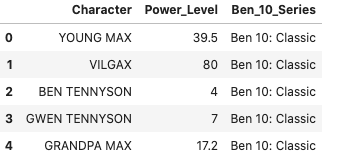

ur dataset consists of three columns and 97 rows. The columns are pretty self-explanatory and on analyzing the data, we immediately turn all the categorical representations into numerical representations.

- Character: The Ben 10 Series Character

- Power Level: Power Level of a character

- Ben 10 Series: The series associated with the character

Dataset

lr = LabelEncoder()

def convert_id_to_category(index, mapping=None):

"""

Convert the id into the label

Arguments:

mappping: The Mapping built by the LabelEncoder

id: id corresponding to the label

Returns:

str: the label

"""

return mapping.get(index, False)

def convert_to_mapping(lr:LabelEncoder):

"""

Extract the mapping from the LabelEncoder

Returns:

Dict: key/value for the label encoded

"""

mapping = dict(list(zip(lr.transform(lr.classes_),lr.classes_)))

return mapping

def get_power_level_mapping(df=None):

mapping = {}

for i in range(0, len(df)):

mapping[df.loc[i].Character] = df.loc[i].Power_Level

vtok = {}

for i,j in enumerate(mapping):

vtok[mapping[j]] = j

return mapping, vtok

## Ben_10_Series

df['Ben_10_Series'] = lr.fit_transform(df['Ben_10_Series'])

mapping_ben_10_series = convert_to_mapping(lr)

df['Character'] = lr.fit_transform(df['Character'])

mapping_character = convert_to_mapping(lr)

print ("Length [Ben_10_Series]: {}".format(len(mapping_ben_10_series)))

print ("Length [Character]: {}".format(len(mapping_character)))

def remove_string_powerlevel(df=None):

"""

Replaces the string format of power level into an integer. (Manually checked the data)

Arguments:

df: Pandas DataFrame

Returns

None

"""

## lowe bound

df.loc[28, "Power_Level"] = "265"

df.loc[93, "Power_Level"] = "12.5"

df.loc[51, "Power_Level"] = "195"

df.loc[52, "Power_Level"] = "160"

df.loc[62, "Power_Level"] = "140"

df.loc[67, "Power_Level"] = "20"

df['Power_Level'] = df['Power_Level'].str.replace(",","")

## converting power_level to float

df['Power_Level'] = df['Power_Level'].astype(float)

df['Character'] = df['Character'].astype(int)

remove_string_powerlevel(df)

In

addition to changing the categorical representations, we cleaned the data (column: Power_Level) as it contained some levels that were texts. The column also contained commas so we cleaned that as well.

#machine-learning #pandas #python-numpy #tensorflow #data-science #deep learning