An artificial neural network (ANN) is a machine learning model that borrows concepts from the human brain to build computer programs that can learn to solve problems. This paper explains the calculations involved in training a neural network.

Node

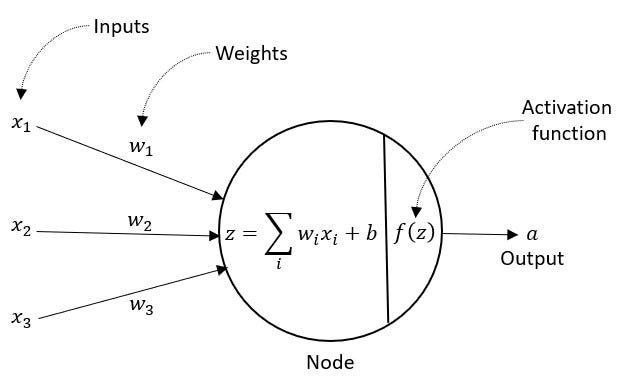

An artificial neural network consists of interconnected nodes that are organized in layers. A node, like a neuron in the human brain, is the basic building block of a neural network. Figure 1 shows a single node. A node receives its inputs either from data in the input layer or from the outputs of other nodes, which are called activations. Each input into a node is associated with a weight (w), which represents the magnitude of that input's influence on the node. The node computes a weighted sum of its inputs and then applies an activation function (explained below) to determine its output.

Figure 1. Neural Network Node
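The computation a single node performs can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function name `node_output`, the sample inputs and weights, and the choice of `math.tanh` as the activation are all assumptions made for the example.

```python
import math
from typing import Callable, Sequence


def node_output(
    inputs: Sequence[float],
    weights: Sequence[float],
    activation: Callable[[float], float],
) -> float:
    """Return the output of one node: activation(weighted sum of inputs)."""
    if len(inputs) != len(weights):
        raise ValueError("each input needs exactly one weight")
    # Weighted sum: every input is scaled by its weight, then all are added.
    z = sum(w * x for w, x in zip(weights, inputs))
    # The activation function turns the linear value z into the node's output.
    return activation(z)


# Hypothetical values, chosen only for illustration.
x = [1.0, 2.0, 3.0]
w = [0.5, -0.25, 0.1]
a = node_output(x, w, math.tanh)  # z = 0.5 - 0.5 + 0.3 = 0.3
print(a)
```

Passing the activation as a parameter keeps the weighted sum separate from the non-linear step, which mirrors the two stages described above.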

Activation Function

In a neural network, an activation function is a non-linear transformation of the input values; it is required to enable the modeling of complex tasks. As shown in Figure 1, the weighted sum of the inputs x_1, ..., x_n

$$z = \sum_{i=1}^{n} w_i x_i$$

is a linear transformation. This linear value z is then passed through an activation function f(z), which is non-linear. Several activation functions are commonly used in machine learning, such as Sigmoid, TanH, ReLU, and Softmax. Figure 2 shows a sigmoid function, which converts a linear value to a value between 0 and 1.

Figure 2. Sigmoid Function
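For reference, the standard sigmoid is $\sigma(z) = 1 / (1 + e^{-z})$. The sketch below is one way to compute it in Python. The name `sigmoid` and the sample values are assumptions for illustration, and the two-branch form is a common way to avoid overflow in `math.exp` for large negative z, not something the figure prescribes.

```python
import math


def sigmoid(z: float) -> float:
    """Map any real z to a value between 0 and 1."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    # For negative z, use the equivalent form e^z / (1 + e^z) so that
    # math.exp is never called with a large positive argument.
    ez = math.exp(z)
    return ez / (1.0 + ez)


# Sample inputs, chosen only for illustration.
for z in (-5.0, 0.0, 5.0):
    print(z, sigmoid(z))
```

Large negative inputs map close to 0, large positive inputs map close to 1, and z = 0 maps to exactly 0.5.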

Loss Function

A loss function in a neural network measures the deviation of the estimated values from the actual values. The optimal model parameters (weights) are obtained by minimizing the total loss value. The loss function used depends on the goal of the model. If the goal is to predict a continuous value, the loss function commonly used is Mean Squared Error (MSE):

$$\text{MSE} = \frac{1}{n} \sum_{i=1}^{n} (y_i - \hat{y}_i)^2$$

where y is the true value, ŷ is the predicted value, and n is the number of observations.
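As a small worked example, MSE can be computed directly from its definition: average the squared differences between true and predicted values. The function name `mean_squared_error` and the sample values below are assumptions for illustration only.

```python
from typing import Sequence


def mean_squared_error(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    """Average of the squared differences between true and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty sequences of equal length")
    return sum((y - y_hat) ** 2 for y, y_hat in zip(y_true, y_pred)) / len(y_true)


# Hypothetical true and predicted values.
y = [3.0, 5.0, 2.0]
y_hat = [2.0, 5.0, 4.0]
loss = mean_squared_error(y, y_hat)  # (1 + 0 + 4) / 3
print(loss)
```

Because the errors are squared, the prediction that misses by 2 contributes four times as much to the loss as the one that misses by 1.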

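If the goal is to classify, a binary cross entropy loss function is used for binary classification:

$$L = -\frac{1}{n} \sum_{i=1}^{n} \left[ y_i \log \hat{y}_i + (1 - y_i) \log (1 - \hat{y}_i) \right]$$

and a cross entropy loss function is used for multi-class classification:

$$L = -\frac{1}{n} \sum_{i=1}^{n} \sum_{j=1}^{k} y_{ij} \log \hat{y}_{ij}$$

where k is the number of classes and n is the number of observations.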
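The two classification losses can be sketched in plain Python as follows. This assumes the usual conventions: labels are 0 or 1 in the binary case and one-hot rows in the multi-class case, predictions are probabilities, and the loss is averaged over observations. The function names, the clipping constant `EPS`, and the sample values are assumptions made for this example.

```python
import math
from typing import Sequence

EPS = 1e-12  # assumed clipping constant that keeps log() away from 0


def binary_cross_entropy(y_true: Sequence[int], p_pred: Sequence[float]) -> float:
    """Mean binary cross entropy; p_pred holds the predicted P(class = 1)."""
    total = 0.0
    for y, p in zip(y_true, p_pred):
        p = min(max(p, EPS), 1.0 - EPS)  # clip into (0, 1)
        total += y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return -total / len(y_true)


def cross_entropy(
    y_true: Sequence[Sequence[int]], p_pred: Sequence[Sequence[float]]
) -> float:
    """Mean cross entropy over k classes; each row of y_true is one-hot."""
    total = 0.0
    for y_row, p_row in zip(y_true, p_pred):
        # Only the true class (y == 1) contributes to the inner sum.
        total += sum(y * math.log(max(p, EPS)) for y, p in zip(y_row, p_row))
    return -total / len(y_true)


# Hypothetical labels and predicted probabilities.
bce = binary_cross_entropy([1, 0], [0.9, 0.2])
ce = cross_entropy([[0, 1, 0], [1, 0, 0]], [[0.1, 0.8, 0.1], [0.7, 0.2, 0.1]])
print(bce, ce)
```

With k = 2 and one-hot labels, `cross_entropy` gives the same value as `binary_cross_entropy`, which is why the binary loss can be seen as a special case of the multi-class one.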
