Introduction

Logistic Regression is one of the most widely used algorithms for classification. It is easy to use and performs very well when the classes are linearly separable. The model is based on the probability that a sample belongs to a class, so its output must be continuous and bounded between 0 and 1. To produce that probability it relies on the Sigmoid (or Logistic) function, and a threshold on the resulting probability is then used to make the class decision.

To understand Logistic Regression, it is important to first understand the Odds Ratio (OR), the Logit function, the Sigmoid (or Logistic) function, and Cross-entropy (or Log Loss).

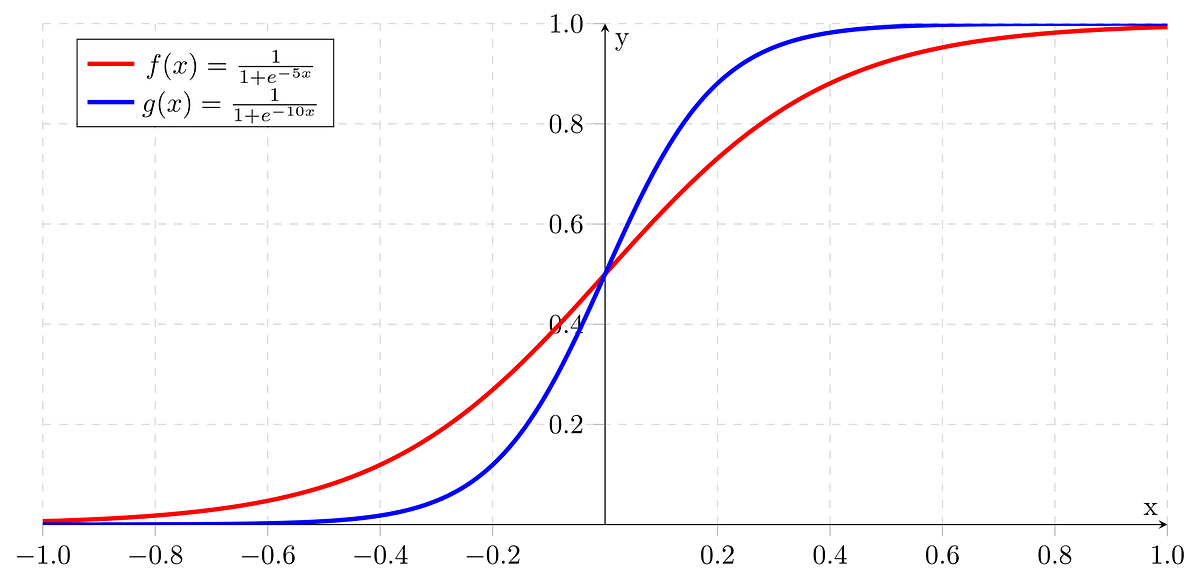

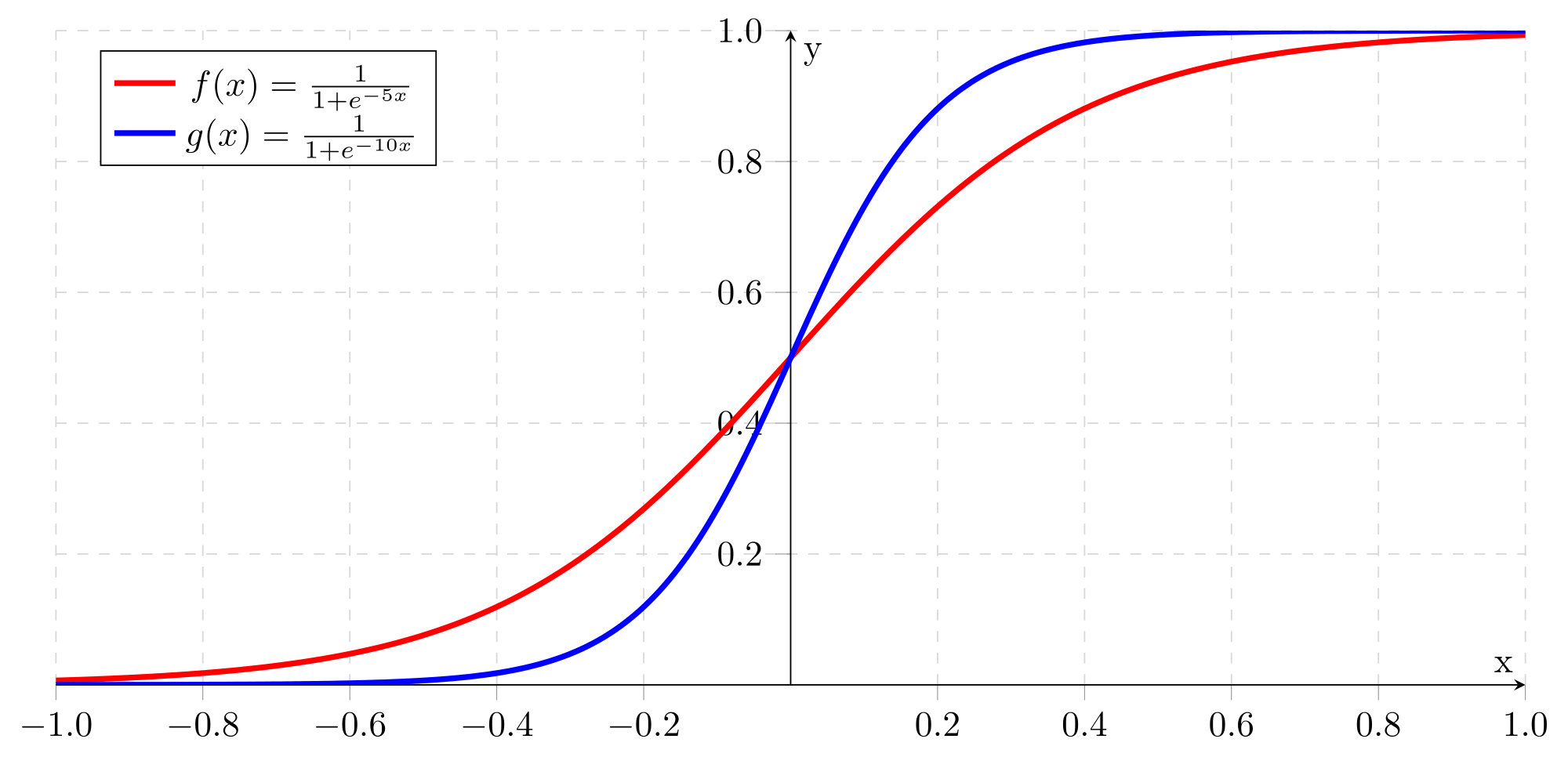

Sigmoid
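As a minimal sketch of the idea described in the introduction, the Python snippet below computes the sigmoid and applies a decision threshold. The function names `sigmoid` and `predict_class` and the default threshold of 0.5 are assumptions chosen for illustration, not something the article prescribes.

```python
import math

def sigmoid(z: float) -> float:
    """Map a real number z to a probability in the range 0 to 1."""
    # Two branches avoid overflow in math.exp for large |z|.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)

def predict_class(z: float, threshold: float = 0.5) -> int:
    """Return 1 if the sigmoid probability reaches the threshold, else 0.

    The 0.5 default is an assumed, commonly used cut-off.
    """
    return 1 if sigmoid(z) >= threshold else 0

for z in (-4.0, 0.0, 4.0):
    print(z, round(sigmoid(z), 4), predict_class(z))
```

Large negative inputs land close to 0, large positive inputs close to 1, and an input of 0 gives exactly 0.5, which is why 0.5 is a natural default threshold.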

Odds Ratio (OR)

The odds express how strongly a particular event is favored. The Odds Ratio (OR) compares the odds between two groups and is a measure of association between exposure and outcome.

Let X be the probability that a subject is affected and Y the probability that a subject is not affected; then odds = X/Y.

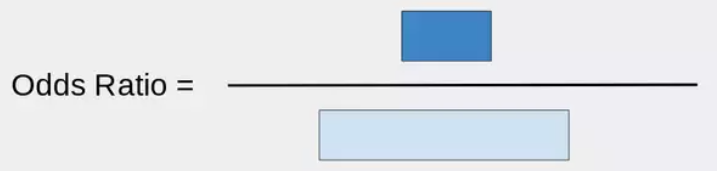

Odds Ratio

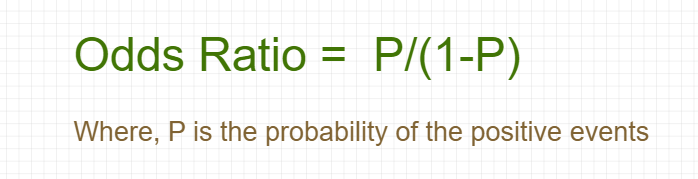

The formula of Odds Ratio:

Odds Ratio equation
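For readers who cannot see the figure, the standard textbook definitions can be written as follows. The symbols p, p_1 and p_2 are assumptions introduced here (the probability of the event in general, and in the two groups being compared); the notation in the original figure may differ.

$$
\text{odds} = \frac{p}{1 - p},
\qquad
\text{OR} = \frac{p_1 / (1 - p_1)}{p_2 / (1 - p_2)}
$$

An OR of 1 means the odds are the same in both groups, while a value above 1 means the event is more favored in the first group.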

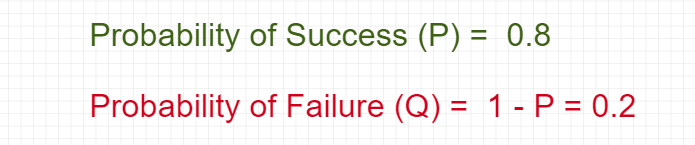

Let’s take a probability in the range between 0 and 1. Let’s say…

Probability of Success and Failure

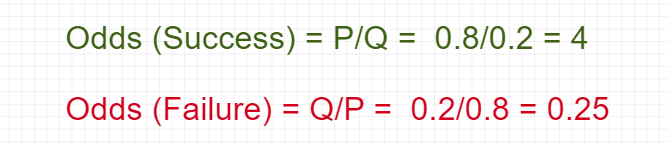

Odds are the ratio of the probability of success to the probability of failure.

Odds of Success and Failure
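The relationship between probability and odds can be sketched in a few lines of Python. The helper name `odds` and the value 0.8 are illustrative assumptions, not figures from the article.

```python
def odds(p: float) -> float:
    """Odds in favor of an event with probability p (requires 0 <= p < 1)."""
    if not 0.0 <= p < 1.0:
        raise ValueError("p must satisfy 0 <= p < 1")
    return p / (1.0 - p)

p_success = 0.8                  # illustrative value, not from the article
p_failure = 1.0 - p_success

odds_success = odds(p_success)   # about 4: success is roughly 4 times as likely as failure
odds_failure = odds(p_failure)   # about 0.25: the reciprocal of the odds of success

print(odds_success, odds_failure)
```

Unlike a probability, odds are not bounded above by 1: they equal 1 when success and failure are equally likely and grow without limit as the probability approaches 1.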

Problem Statement

Suppose that 7 out of 10 boys are admitted to Data Science while 3 out of 10 girls are admitted. Find the probability of boys getting admitted to Data Science.

Solution

Let P be the probability of getting admitted and **Q** be the probability of not getting admitted.
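One way to carry this setup through numerically is sketched below, using only the figures from the problem statement. The variable names are assumptions, and the final odds-ratio step uses the standard definition (odds of one group divided by odds of the other), which goes slightly beyond what the problem asks.

```python
from fractions import Fraction

# Figures from the problem statement.
p_boys = Fraction(7, 10)     # P: probability that a boy is admitted
q_boys = 1 - p_boys          # Q: probability that a boy is not admitted

p_girls = Fraction(3, 10)    # same quantities for girls
q_girls = 1 - p_girls

odds_boys = p_boys / q_boys        # 7/3
odds_girls = p_girls / q_girls     # 3/7

# Odds ratio of boys relative to girls (standard definition, assumed here).
odds_ratio = odds_boys / odds_girls   # 49/9

print(f"P = {p_boys}, Q = {q_boys}")
print(f"odds (boys) = {odds_boys}, odds (girls) = {odds_girls}")
print(f"odds ratio = {odds_ratio} = {float(odds_ratio):.2f}")
```

Exact fractions are used instead of floats so the intermediate values (7/3 and 3/7) stay readable and free of rounding noise.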
